Check out the conversation on Apple, Spotify, and YouTube.

Brought to you by:

Amplitude - The market leader in product analytics

Jira Product Discovery - Prioritize what matters with confidence

NayaOne - Airgapped cloud-agnostic sandbox to validate AI tools faster

Product Faculty - Get $550 off their #1 AI PM Certification with my link

Today’s episode

LinkedIn just scrapped its APM program and replaced it with an Associate Product Builder track, and introduced a Full Stack Builder career ladder alongside it.

What does it even mean to be a “builder PM”?

Well, tools only get you so far. Learning Claude Code is helpful, but means nothing if you don’t have an understanding of the underlying first principles.

That’s today’s episode.

Mahesh Yadav created one of our most popular episodes, with over 35K views on YouTube, and now he’s back. Earlier, he taught you AI agents.

Today, he’s teaching you how to become a builder PM.

If you want access to my AI tool stack - Dovetail, Arize, Linear, Descript, Reforge Build, DeepSky, Relay.app, Magic Patterns, Speechify, and Mobbin - grab Aakash’s bundle.

I’m giving a free talk on how to get interviews at the top AI PM companies on Thursday (April 23rd) @ 9:00AM PDT. Grab your seat.

Newsletter deep dive

Thank you for having me in your inbox. Here’s the complete guide to “becoming a builder PM.”

What is a “Builder PM”

The Builder PM Tool Stack

n8n

Claude Code

OpenClaw

Mastering AI Agents

The first principles of agents

How to build self-improving agents

The 10-week roadmap to Builder PM

1. What is a Builder PM

Every PM has already used Claude Code or ChatGPT to get something done. But if that’s where it stops, you are not yet a builder PM.

There are two kinds of builder PM, and they’re different jobs.

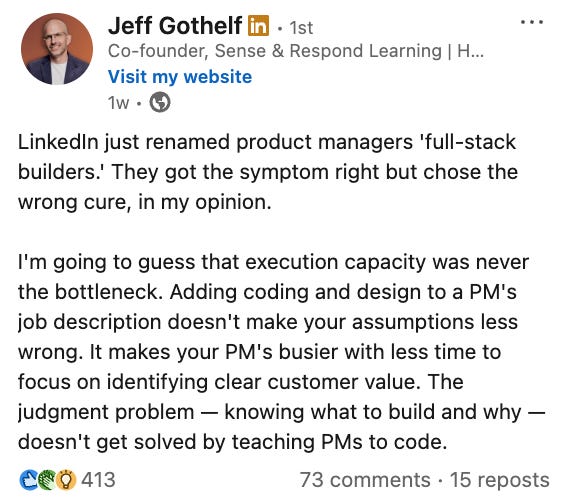

Type one ships customer-facing product without engineering handoffs. From first customer to 10 paying users, solo. This is what the LinkedIn APB program is training for and what lands you the comp trajectory Mahesh describes.

Type two builds internal agents that automate their own PM work. PRD reviewers, competitive intel, data dashboards. Same skills, different trajectory: stay in your current org, ship 3x more, get promoted faster.

Most of this guide teaches type two. Type one is the aspirational endpoint.

Why this title change happened now

The title change is a response to something that actually shifted in the last six months.

Six months ago, most autonomous agents broke within minutes. METR’s latest benchmarks show frontier models now sustaining multi-hour jobs, with Opus 4.7 running coding tasks for 3 to 6 hours in practice.

When agents can only run for 3 minutes, the PM’s job is to prompt them. When agents can run for 6 hours, the PM’s job is to design the system those agents run inside.

Designing that system is what builder PM actually means.

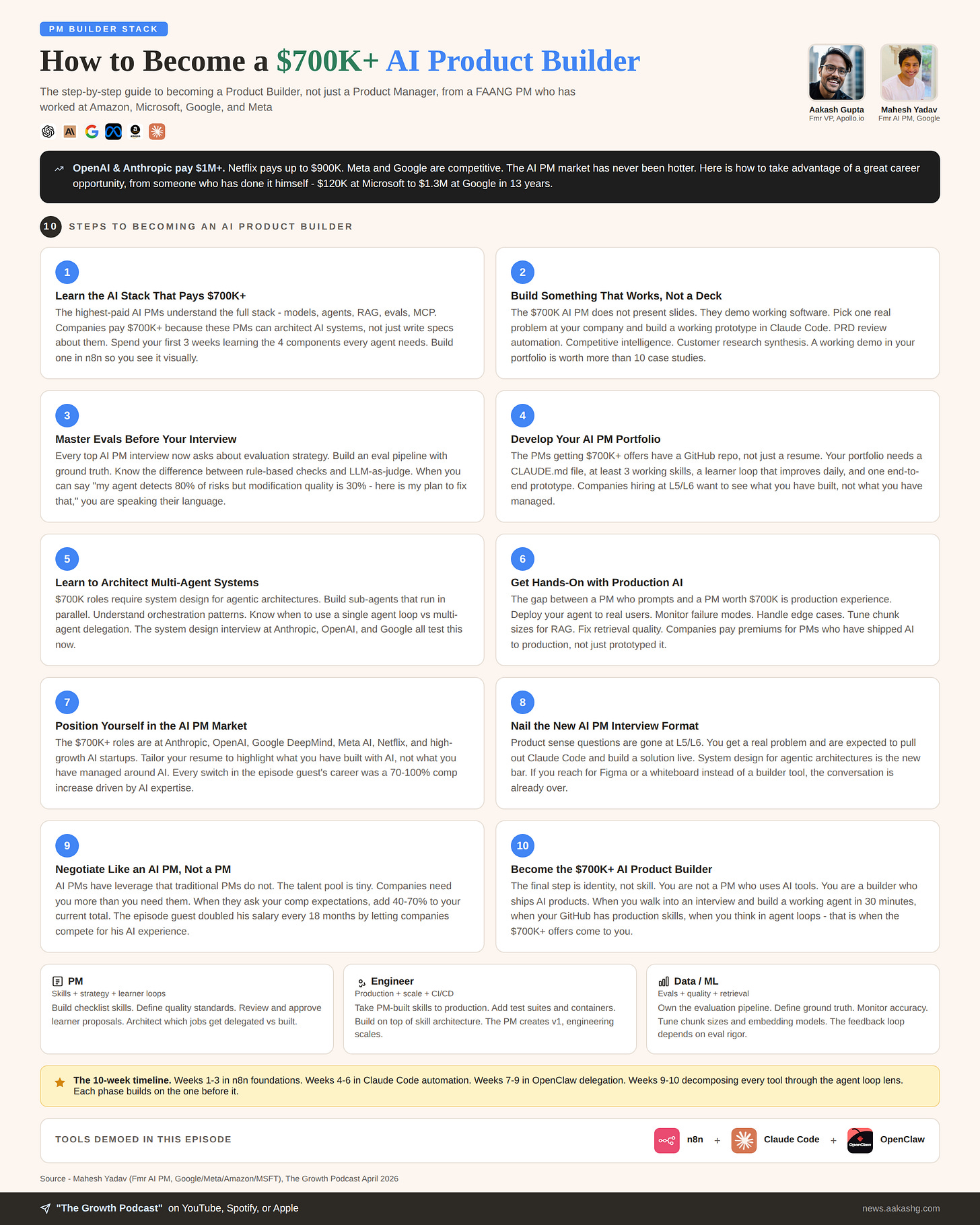

The compensation trajectory, in case you need the motivation

Mahesh’s comp trajectory shows you the top end of what’s possible. He went from $120K at Microsoft to $1.3-1.4M in his last Google role (Senior PM, AI), with a rough doubling every two years. A few of his AI PM friends at Nvidia are at $2-2.5M.

Levels.fyi still pegs median Senior PM comp at top tech around $350-450K. What Mahesh is describing is the premium the US tech market pays specifically for builder-skill AI PMs, where companies approach you and offer 30-40% over your current number. The $2M+ tier is a small group.

International markets pay differently - builder skills still move comp in EU, India, and APAC, just with different absolute numbers and different negotiation dynamics. And if you’re in mission-driven PM work where comp isn’t the yardstick, the same builder skills still let you ship 3x more impact with the same team - which is the whole point.

You probably won’t hit $1.4M next year. The premium is real, though, and the gate is builder skills.

One honest caveat for managers reading this. If your PMs get 10x leveraged, the obvious question is whether you need fewer of them. The early signal from LinkedIn’s restructure is that the answer is smaller pods with broader scope, not layoffs - they’re keeping headcount and pushing each builder to own more surface area. That may or may not hold elsewhere. Worth naming, because every VP Product on this list is already thinking about it.

The failure mode

Here’s the trap: PMs read about Claude Code on Twitter, try it for a weekend, get a mediocre output, and conclude “this is overhyped.”

They skipped the first principles.

That’s also the answer to Gothelf’s objection. He’s right that execution capacity was never the bottleneck. Judgment was. But judgment sharpens when you ship 12 prototypes a year instead of 1. The builder PM still talks to customers. They just ship without waiting.

That’s what the rest of this guide is going to give you.

When building is the wrong bet

Not every PM job benefits from this shift. A few honest cases where the 10-week investment doesn’t pay off:

If you work in trust & safety, healthcare decisions, financial underwriting, or legal compliance, your job is to prevent errors, not to generate throughput. An agent that ships 12 prototypes a year doesn’t help you. An agent that proposes a new UI flow in a payments product can actively hurt you. Build evals skills instead.

If your product lives inside a regulated perimeter and your company has a year-long procurement cycle for new tools, you’ll spend your 10 weeks waiting for IT approval. Spend the time on AI literacy - reading papers, running evals on existing outputs - rather than on personal tool setup.

If you’re a founder with zero PMs under you, skip the “automate your PRD reviews” framing entirely. Your version is shipping real product to real customers using these same tools. The skills transfer. The PM-productivity examples don’t.

Builder PM is the right bet for most mid-career PMs at product-led companies with data portability. That’s a large slice of the market, not all of it.

2. The Builder PM Tool Stack

Three tools. Three different jobs. Using the wrong one for the wrong stage of your journey will waste weeks.

Pick based on where you are in the journey, not on whichever tool has the most Twitter hype this week.

n8n

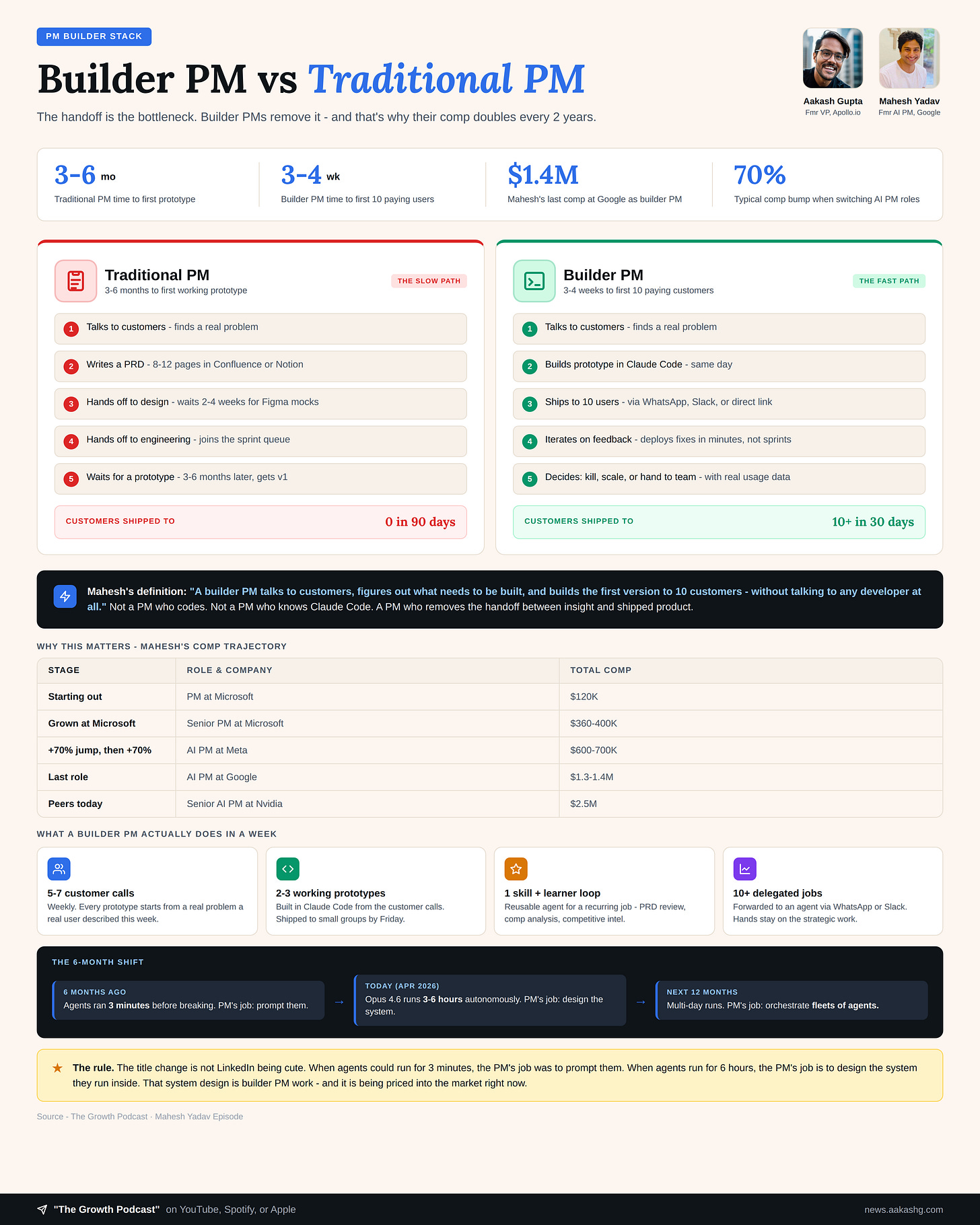

n8n is a visual workflow builder. You drag components onto a canvas and connect them. That’s it.

Everyone who is deep in AI dismisses n8n as “too basic.” They are wrong. n8n is irreplaceable as a learning tool because you physically see every piece of the agent architecture as separate nodes.

In the episode, Mahesh demoed building a contract analyzer in n8n:

1. Email trigger hits when someone sends a contract

2. Gmail node pulls the MSA (master service agreement)

3. Data loader converts the file to text

4. Text splitter chunks it into 1,000-character pieces with 200-character overlap

5. Embedding model converts chunks to vectors

6. Vector database stores them

7. AI agent reads the playbook and flags risks

8. Gmail node emails back the analysis

9. Eval workflow runs against ground truth

Every one of those is a visible node on a canvas. When something breaks, you see exactly where.
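To make the text-splitter step concrete, here is a minimal Python sketch of fixed-size chunking with overlap. The function name `chunk_text` and the stand-in contract text are my own, and I’m assuming plain character offsets; n8n’s splitter node may break on separators instead, so treat this as the idea, not the node’s implementation.

```python
def chunk_text(text: str, size: int = 1000, overlap: int = 200) -> list[str]:
    """Split text into fixed-size character chunks that overlap their neighbors."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap  # with the defaults, each chunk starts 800 characters later
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # this chunk already reaches the end of the text
    return chunks


contract = "x" * 2500  # hypothetical stand-in for text extracted from an MSA
chunks = chunk_text(contract)
print([len(c) for c in chunks])  # [1000, 1000, 900]
```

The overlap is there so a clause that straddles a chunk boundary still appears whole in at least one chunk.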

The limitation is real. n8n is not a code-first environment: version control, test suites, and a path to production beyond a simple webhook are all thin compared with working in a repo. It stops you around 10 customers.

For most builder PMs, use n8n for 2-3 weeks, then move on. The exception is simple internal workflows with clear webhooks and no collaboration needs - those can live in n8n forever and that’s fine. The “graduate from n8n” rule is about your learning trajectory, not about n8n being bad at its job.

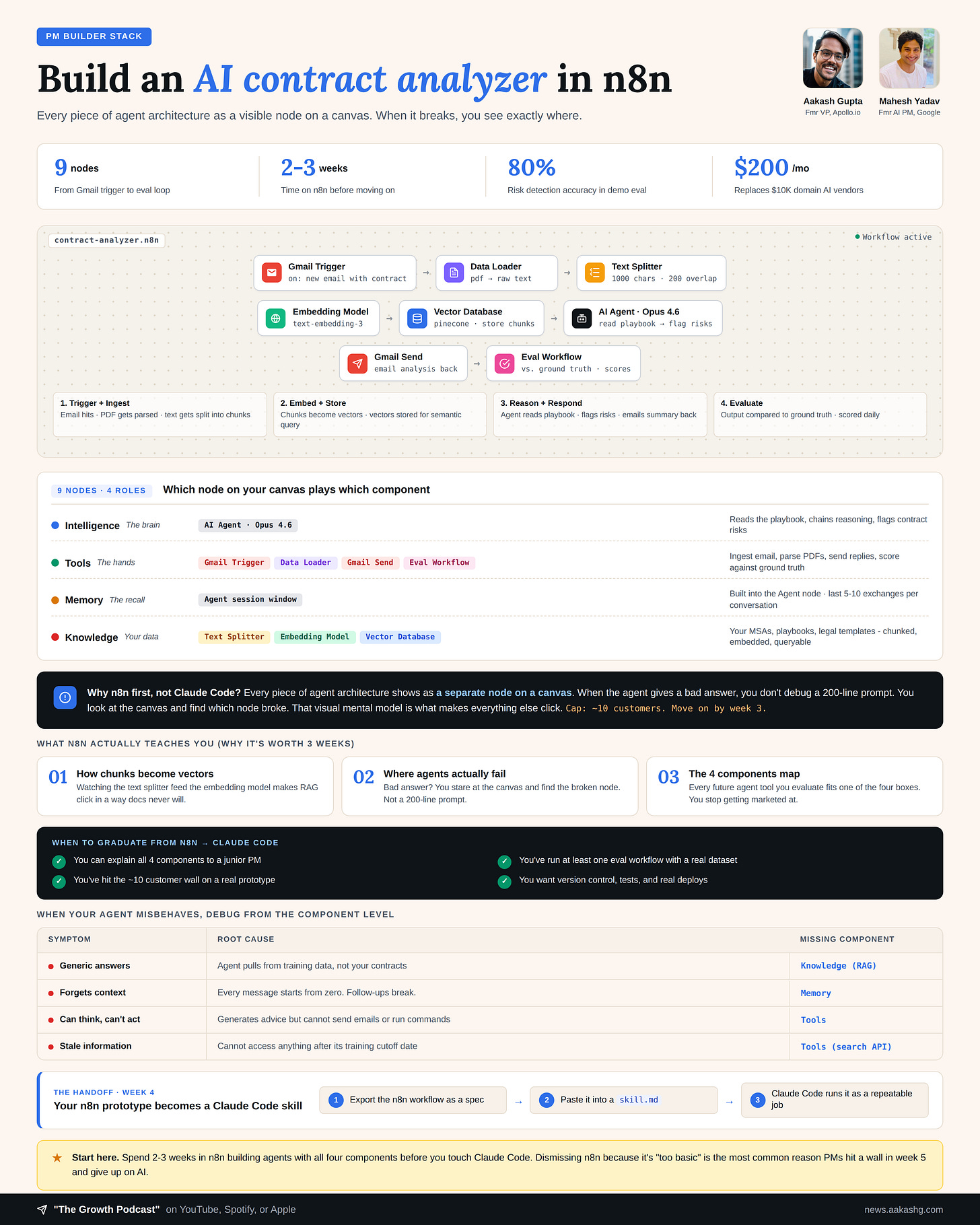

Claude Code

Claude Code is where you build the real thing.

The same tool works for a PM with zero coding experience building skills in English, and for a senior engineer building production services on top of that PM’s work.

Mahesh showed his PRD review setup in the episode. Here is what it does:

He drops a PRD into a folder

Claude Code reads his checklist (ruthlessly specific, encodes Amazon PR/FAQ format, includes AI-specific criteria like “is this differentiated from ChatGPT or is it a commodity AI wrapper”)

The agent reads the document and writes inline comments back into the .docx file, using a Python library to handle the XML under the hood

Comments are strategic, not surface level. Things like “What prevents Datadog or the Big Four from building this?” and “How do you handle misclassification?”

That is already useful. But here is where it gets serious.

Every 30 minutes, a second agent runs. It opens the folder of recent reviews, compares the AI’s output against the version Mahesh actually shipped, and logs the deltas to a learner.md file.

When the same correction appears 5 times across 5 days, the learner emails Mahesh: “I want to update your checklist. Here is the proposed update.”

He reviews, approves, and the checklist evolves.

Every day, the reviewer is a little better than the day before. That is the real moat. Not the initial skill. The learning loop that compounds from your judgment. We’ll go deeper on this in Section 3.

The other things to build in Claude Code:

Competitive intelligence with subagents (3 subagents researching 3 competitors in parallel, rolling up into one report)

Prototypes from PRDs (clone competitor screens, modify them into working products, ship to customers for feedback in days instead of months)

Data dashboards generated from your actual production data

Use Claude Code as your daily driver for weeks 4-6 of the roadmap. By the end, you should have at least 2-3 skills running on your real work, with learner loops on top of each.
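If the subagent idea feels abstract, the shape is fan out, then roll up. Here is a rough Python sketch of that shape. It is not how Claude Code implements subagents; `research_competitor` is a hypothetical stub where a real subagent would call a model and tools, and the competitor names are placeholders.

```python
from concurrent.futures import ThreadPoolExecutor


def research_competitor(name: str) -> str:
    """Hypothetical stand-in for one subagent; a real one would call a model and tools."""
    return f"## {name}\n(findings for {name} would go here)"


def competitive_report(competitors: list[str]) -> str:
    # Fan out: one worker per competitor, all running at the same time.
    with ThreadPoolExecutor(max_workers=max(1, len(competitors))) as pool:
        sections = list(pool.map(research_competitor, competitors))
    # Roll up: pool.map returns results in input order, so the report is stable.
    return "# Competitive report\n\n" + "\n\n".join(sections)


print(competitive_report(["Competitor A", "Competitor B", "Competitor C"]))
```

Each worker gets one narrow job and a clean context, and only the summaries come back to the parent. That is the part worth copying.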

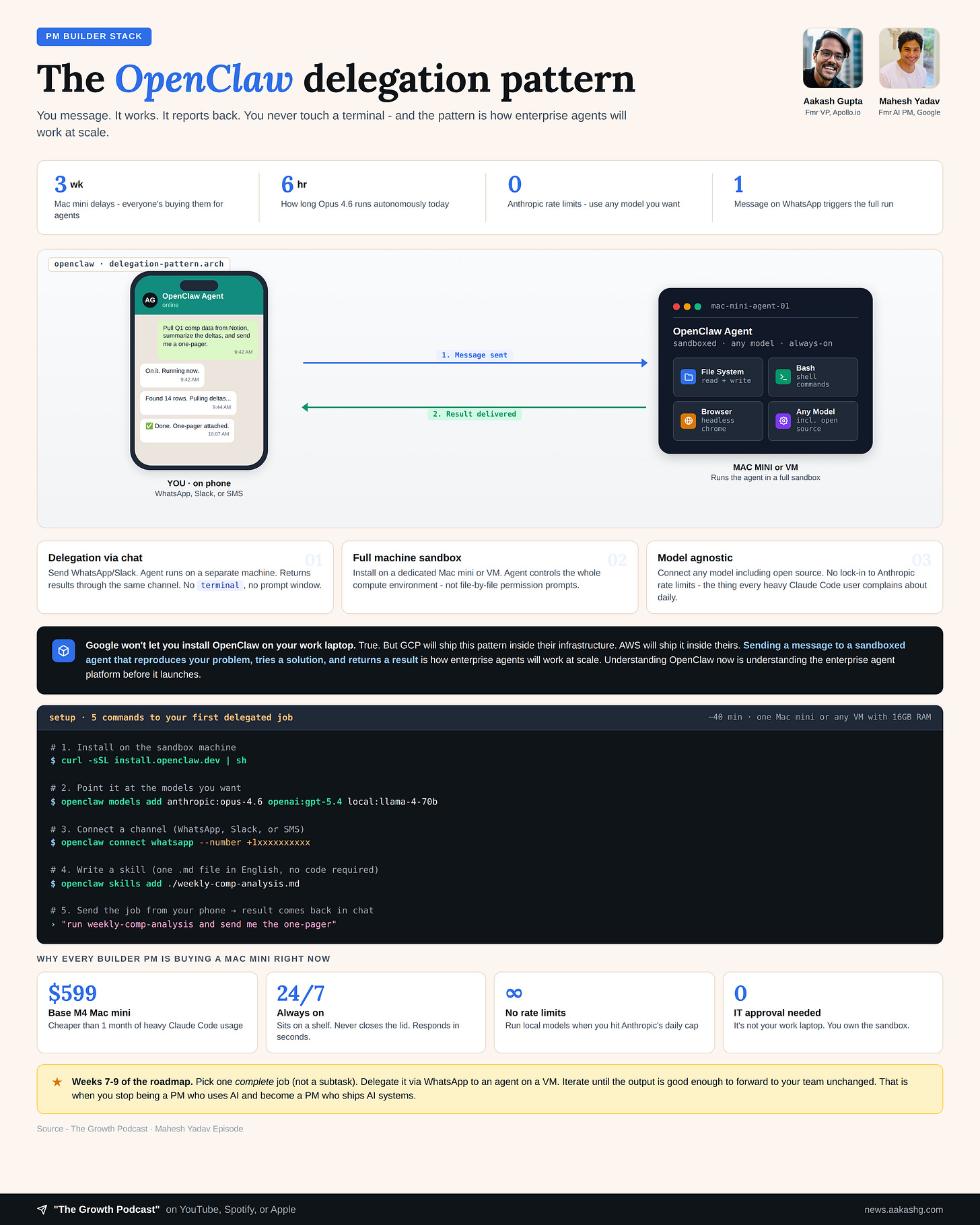

OpenClaw

OpenClaw is a pattern. The pattern matters because enterprise agents will probably work this way at scale. GCP and AWS are already shipping sandboxed-agent primitives, and the architecture maps directly.

Here is what OpenClaw adds that Claude Code doesn’t have:

Delegation through existing channels. You send the agent a WhatsApp message. It goes and does the work on a separate machine. When it is done, it sends the result back through WhatsApp. You are not sitting in a terminal watching it think.

Full machine sandboxing. Instead of granting file-by-file permissions, you install the agent on a dedicated Mac mini or a VM. It controls the entire compute environment. (Side note: Mac minis with 32GB+ are 10-18 weeks out right now, and several high-memory configs are unavailable entirely. CNN reported Apple Store employees calling them “OpenClaw machines.”)

Model agnosticism. Connect any model, including open source. You are not locked into Anthropic’s rate limits, which every heavy Claude Code user complains about daily.

For PMs at big companies asking the obvious question: Google is not going to let you install OpenClaw on your work laptop. That is true. But Google is going to offer the same pattern inside GCP. AWS is going to offer it inside their infrastructure. The architecture of sending a message to a sandboxed agent that reproduces your problem, tries a solution, and returns results - that is how enterprise agents are going to work at scale.

If you’re at a regulated company (finance, health, government, legal) or your data can’t leave the corporate perimeter, the pattern still applies but the venue changes. Your version is Claude Code or an internal agent platform running inside your SSO and VPN. NayaOne-style airgapped sandboxes exist for exactly this reason. The architecture is the same. The compliance envelope is different. Build what you can inside your perimeter and push your infra team for the pattern on the outside.

Learn the OpenClaw pattern now and you’ll recognize the shape of enterprise agent platforms when they arrive.

3. Mastering AI Agents

Using tools without first principles is how PMs waste 3 weeks. Here are the first principles.

The first principles of agents

Every working agent has 4 components. Every disappointing agent is missing at least one. Before you debug a prompt, debug the architecture.

Component 1 - Intelligence (The brain)

The model is the intelligence layer. My current default: Opus 4.7 for agent orchestration and skill authoring, GPT-5.4 for fast single-turn tasks where latency matters. You can run either. The rest of the architecture matters more than this choice.

On its own, the model is a genius with amnesia. Ask it about neural networks, and it answers perfectly. Ask it what Trump said about Iran this week, and if you picked a cheap model, it tells you its knowledge cutoff is June 2024.

The intelligence layer alone gives you knowledge cutoffs, generic answers, and zero session memory. A model without the other three components is just a more expensive way to use Google.

Component 2 - Tools (The hands)

Tools let the agent take actions. Without them, it can think but it cannot do anything.

In the demo, Mahesh added Tavily (a search tool) to the agent. Same question about Iran. This time, the agent searched, found the answer, and responded with current information. One tool turned it from useless to useful.

The essential tools for PM agents are search APIs (Tavily, Perplexity) for current information, file processors to read contracts and PRDs, MCP servers to connect to Gmail, Slack, GitHub, and your CRM, and bash execution to run scripts. PMs who skip this layer wonder why their agent cannot do anything beyond trivia.

Component 3 - Memory (The recall)

Without memory, the agent forgets everything between messages.

The demo showed this failure instantly. The agent searched and answered the Iran question. Two messages later, Mahesh asked “what conflict am I talking about?” and the agent replied that it saw no previous mention of a conflict. Adding session memory fixed it in one click.

Memory has three layers: session memory for the current conversation, long-term memory for patterns across days and weeks, and structured memory for specific facts the agent needs to retrieve reliably. Every PM agent that feels “dumb” is almost certainly missing one of these.
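Session memory is the simplest of the three layers, and a small Python sketch shows why the demo failed without it. The `SessionMemory` class is my own illustration, assuming the common pattern of resending earlier turns with each new message. It does not cover long-term or structured memory.

```python
from dataclasses import dataclass, field


@dataclass
class SessionMemory:
    """Keeps the running conversation so each model call sees earlier turns."""
    turns: list[dict] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def as_prompt(self, new_message: str) -> list[dict]:
        # Earlier turns go first, then the new user message.
        return self.turns + [{"role": "user", "content": new_message}]


memory = SessionMemory()
memory.add("user", "What did Trump say about Iran this week?")
memory.add("assistant", "(answer found via search)")

with_memory = memory.as_prompt("What conflict am I talking about?")
without_memory = SessionMemory().as_prompt("What conflict am I talking about?")
print(len(with_memory), len(without_memory))  # 3 1
```

Without memory, the model receives one message and has nothing to resolve “what conflict” against. With it, the earlier question rides along.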

Component 4 - Knowledge (Your data)

This is where your agent stops being a generic chatbot and starts being valuable.

Knowledge = your company-specific context. Contracts, playbooks, competitive intel, product specs, customer research. Without it, the agent answers from the internet. With it, the agent answers from your actual data.

The RAG pipeline is straightforward: upload your documents, chunk them into 1,000-character pieces with 200-character overlap, convert to vectors, store in a database, and query when asked a question.

In the demo, Mahesh asked about payment terms and tariff impacts on company contracts. Without knowledge, the agent gave generic legal advice from the internet. With the MSA uploaded, the agent answered from the actual contract clauses.

That difference is the entire value proposition of building your own agents vs. using a generic chatbot.
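Here is a toy Python sketch of the store-and-query half of that pipeline. Big assumptions: `embed` is a bag-of-words counter standing in for a real embedding model, `VectorStore` is an in-memory list standing in for a vector database, and the contract clauses are invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory list of (chunk, vector) pairs standing in for a vector database."""

    def __init__(self) -> None:
        self.rows: list[tuple[str, Counter]] = []

    def add(self, chunk: str) -> None:
        self.rows.append((chunk, embed(chunk)))

    def query(self, question: str, k: int = 1) -> list[str]:
        q = embed(question)
        ranked = sorted(self.rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


store = VectorStore()
# Invented clauses for illustration; not taken from any real contract.
store.add("Payment terms: invoices are due within 45 days of receipt.")
store.add("Either party may terminate with 30 days written notice.")
store.add("Tariff increases above 5 percent may be passed through to the customer.")

context = store.query("What are the payment terms?")
print(context)
```

The retrieved chunk is what gets pasted into the model’s prompt, which is how the answer ends up grounded in the contract instead of the open internet.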

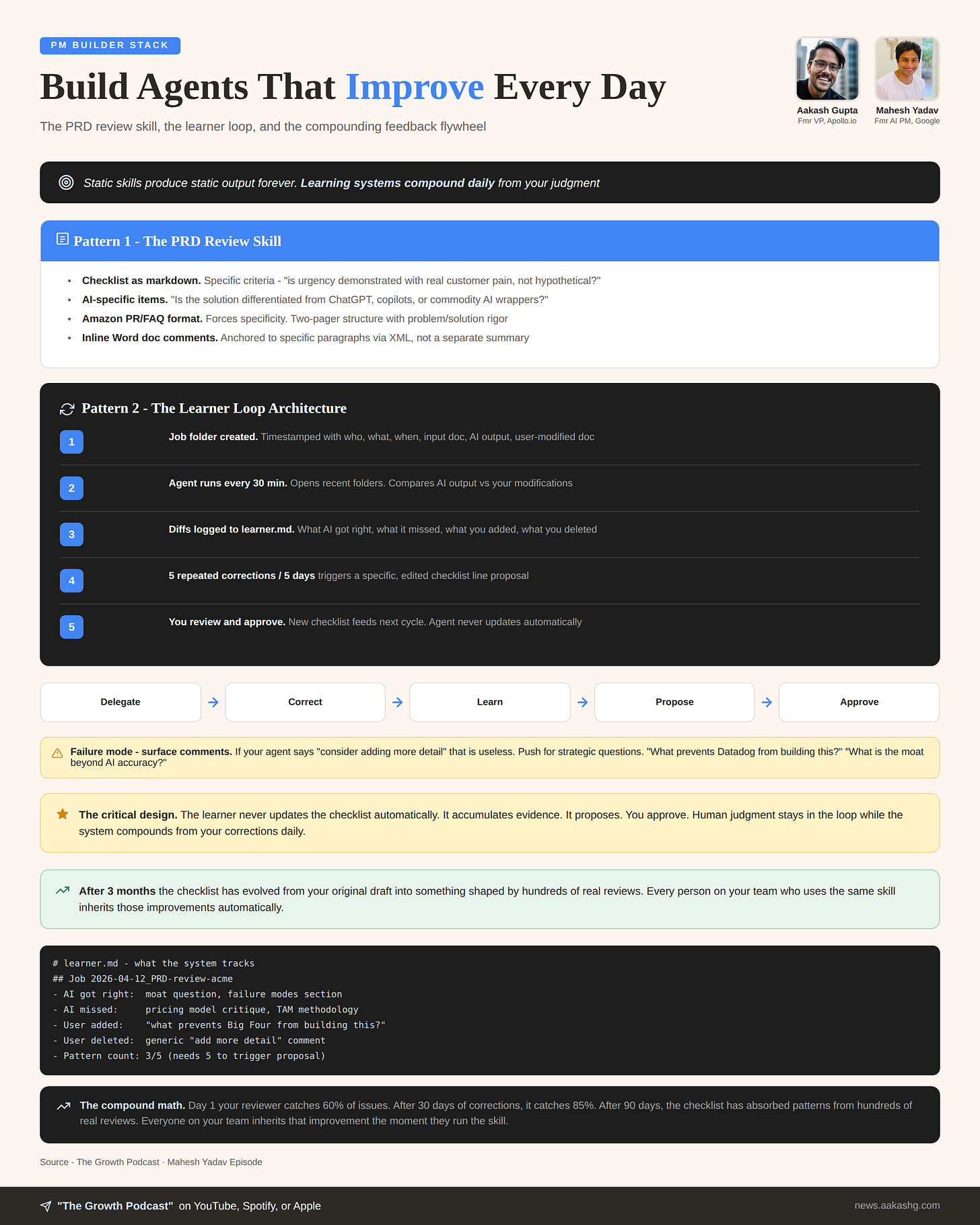

How to build self-improving agents

This is the section almost nobody is doing yet. Which is exactly why it matters.

You automated one task. Your PRD review agent works. Your competitive analysis runs every morning. Then you notice the agent makes the same mistake three days in a row. It misses the same section. It applies the wrong standard.

Static skills produce static output forever. Learning systems compound daily.

Here is the learner loop architecture Mahesh runs on his PRD reviewer:

Step 1 - Every review creates a job folder

Timestamped. Contains:

Who ran the review

The input document

The AI’s output

The version you actually shipped after your corrections

Step 2 - A scheduled subagent runs every 30 minutes

It opens recent job folders. Compares the AI’s output against your modified version.

Step 3 - Differences get logged to learner.md

What the AI got right. What it missed. What you added. What you deleted.

Step 4 - A threshold triggers a proposal

When the same correction appears 5 times over 5 days, the learner proposes a specific checklist update: an actual edited version of the checklist line.

Step 5 - You review and approve

The new checklist becomes the input for the next review cycle.

The critical design decision is that the learner never updates the checklist automatically. It accumulates evidence, it proposes, you approve. You stay in the loop. The system still compounds.
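Steps 2 through 4 can be sketched in a few lines of Python. This is my own illustration, not Mahesh’s code. I’m reading “5 times over 5 days” as at least 5 occurrences on at least 5 distinct days, and I’m assuming each correction has already been reduced to a short label so repeats can be counted. The function names and sample labels are hypothetical.

```python
import difflib
from collections import defaultdict
from datetime import date


def diff_lines(ai_output: str, shipped: str) -> dict[str, list[str]]:
    """Compare the AI draft with the shipped version, line by line."""
    added, deleted = [], []
    for line in difflib.ndiff(ai_output.splitlines(), shipped.splitlines()):
        if line.startswith("+ "):
            added.append(line[2:])    # you added this; the AI missed it
        elif line.startswith("- "):
            deleted.append(line[2:])  # the AI wrote this; you removed it
    return {"added": added, "deleted": deleted}


def proposals(log: list[tuple[date, str]], min_count: int = 5, min_days: int = 5) -> list[str]:
    """Return corrections seen at least min_count times on at least min_days distinct days."""
    counts = defaultdict(int)
    days_seen = defaultdict(set)
    for day, correction in log:
        counts[correction] += 1
        days_seen[correction].add(day)
    # Assumption: both the count and the distinct-day thresholds must be met.
    return [c for c in counts if counts[c] >= min_count and len(days_seen[c]) >= min_days]


delta = diff_lines("Goal\nMetrics", "Goal\nMetrics\nMisclassification handling")
print(delta)  # {'added': ['Misclassification handling'], 'deleted': []}

log = [(date(2025, 1, d), "add misclassification handling") for d in range(1, 6)]
log.append((date(2025, 1, 2), "tighten success metric"))
print(proposals(log))  # ['add misclassification handling']
```

Note what the sketch does not do: it never writes to the checklist. It only returns proposals, which matches the approve-first design above.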

After a month, your review agent catches things it missed on day one.

After three months, your checklist has evolved from your original draft into something shaped by hundreds of real reviews and your actual judgment calls.

Inside a team, you’ll want one canonical version of each skill in a shared repo, with a light review process when someone proposes a merge. Otherwise you get skill drift, five competing PRD reviewers, and nobody knows which one is current.

Static skills are where most PMs stop. The learning systems that compound from your judgment are what separate the builder PM who gets promoted from the builder PM who watched a YouTube video.

4. The 10-week roadmap to Builder PM

You have a full-time job and maybe 5-8 hours a week to learn this. Here is the sequencing, tested with real PMs in real cohorts:

Weeks 1-3, Foundations. n8n only. Build one agent with all 4 components, run one real evaluation, build one multi-agent system. By week 3 you can explain the 4 components to a junior PM.

Weeks 4-6, Build. Move to Claude Code. Pick one real weekly task and automate it with a skill. Add a learner loop on top. Spin up your first subagent system (3 competitors in parallel works well).

Weeks 7-9, Delegate. OpenClaw time. Pick one complete job (not a subtask) and delegate it via WhatsApp to an agent on a VM. Iterate on the skill until the output is good enough to forward to your team unchanged.

Week 10, See the pattern. Read the AI tool market through this lens. Every new tool: is it solving for context, actions, or evals? Is it a variant of the agentic loop? Could you build it yourself?

The biggest failure mode is skipping Phase 1. PMs who jump straight to Claude Code hit a wall around week 3, have no mental model for debugging, and conclude “Claude Code is overhyped.” Do not be that PM.

That decomposition skill from Phase 4 is also what’s showing up in L5 and L6 AI PM interviews. Based on reports from candidates I’ve coached through Land a PM Job and notes from Blind, some loops now include live building exercises where you’re expected to open Claude Code and produce a working prototype.

Candidates who default to Figma mocks when the interviewer is looking for a working prototype have lost offers. Figma is still the right tool for design work. The signal the interviewer is looking for is whether you can ship.

For hiring managers

In 6 months every PM resume will claim builder skills. Two questions cut through it:

“Walk me through a skill you’ve built and how the checklist evolved.” Real builders have version history. Fakes have a one-liner.

“Show me the learner.md.” Real builders have a file of failures. Fakes don’t.

If a candidate says they’ve used Claude Code but can’t name the four components of an agent or explain when they’d choose n8n over Claude Code, they watched a YouTube video. That’s different from building.

[Bonus] Episode Summary

Ten weeks. Three phases of building. One phase of seeing.

At the end, you are a PM who builds AI systems that get better every day.

In 2026, that is the difference between being automated and being the one doing the automating.

PS1. Please subscribe on YouTube and follow on Apple & Spotify. It helps!

PS2. The third cohort of my LandPMJob Program is filling up. Apply now!