How to Price AI Products: The Complete Guide for PMs

Cursor's $7,225 invoice broke the internet. Here's what every AI PM can learn from it.

This tweet got 797,000 views in a week:

$7,225 invoice. 500 requests burned by one developer in a single day. “Is that even legal?”

That was Cursor, the fastest-growing AI startup in history that isn't a foundation model company, two weeks after switching its pricing model. The CEO had to publish a public apology, and Cursor offered refunds for unexpected usage charges incurred between June 16 and July 4, 2025.

And Cursor isn’t even the worst example.

Anthropic quietly introduced weekly rate limits that it said would affect less than 5% of Claude subscribers. OpenAI started testing ads for free ChatGPT users in February 2026. Replit's gross margins swung from 36% to negative 14% within months as its AI agent consumed more LLM resources than its pricing covered. Intercom charges $0.99 per AI resolution, and a single company's bill can swing from $50 to $30,000/month depending on how good the bot gets.

Every AI company is figuring out pricing in real time.

I spent two weeks mapping the pricing models of the top 50 AI startups by valuation with Moe Ali. We read every pricing page, tracked down actual cost numbers, and argued about how to categorize companies that use three models at once. Six distinct patterns emerged. Some of them surprised us.

So we built the pricing guide we wish existed.

Introducing Moe

Moe Ali, CEO of Product Faculty, runs the top-ranked AI PM Certificate on Maven.

I took it all the way back in 2024 and learned a ton. It's great if you're starting your AI PM journey. Use my discount code AAKASH25 to get $500 off.

Today’s Post

Together, we built the most comprehensive AI pricing guide for PMs on the internet:

The 6 AI Pricing Models (With 30+ Real Companies)

4 Case Studies: Anthropic, Cursor, Intercom, and Replit

How to Pick Your Pricing Model (A Practical Decision Tree)

Pricing in AI PM Interviews

What Makes AI Pricing Different

Before we get into models, you need to understand why AI pricing is uniquely hard.

Traditional SaaS has near-zero marginal cost per user. One more Notion subscriber costs Notion almost nothing. That's why SaaS gross margins typically run 70-90%.

AI products are different.

Every time a user sends a prompt, processes a document, or runs an agent, the company pays for compute. A casual Claude user sending 10 messages a day costs Anthropic pennies. A developer running Claude Code eight hours a day can cost Anthropic tens of thousands of dollars per month on the original $200 plan, as Anthropic itself acknowledged.

That creates the core pricing tension: your best users are your most expensive users.

AI-first SaaS gross margins run 20-60%, compared to 70-90% for traditional SaaS. GitHub Copilot reportedly lost money per user at launch. Even OpenAI, at $13B+ revenue, burned $8 billion on compute in 2025 and projects $14 billion in cumulative losses by end of 2026.
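A back-of-the-envelope model makes the tension concrete. This sketch prices usage at the Sonnet 4.5 API list rates quoted in Model 2 below ($3/$15 per million input/output tokens). The per-message token counts and daily volumes are hypothetical assumptions, the model ignores prompt caching and other discounts, and API list price is only a rough proxy for what serving actually costs a provider.

```python
# Sonnet 4.5 API list prices (USD per million tokens), as quoted in this guide.
# Assumption: list price stands in for serving cost; caching/discounts ignored.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def monthly_cost(messages_per_day: int, input_tokens: int, output_tokens: int,
                 days: int = 30) -> float:
    """Approximate monthly inference cost for one user.

    input_tokens and output_tokens are hypothetical per-message averages.
    """
    per_message = (input_tokens * INPUT_PRICE_PER_M
                   + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000
    return per_message * messages_per_day * days


def gross_margin(price: float, cost: float) -> float:
    """Gross margin as a fraction of revenue: (revenue - cost) / revenue."""
    return (price - cost) / price


# Hypothetical casual chat user: 10 short messages a day.
casual = monthly_cost(messages_per_day=10, input_tokens=2_000, output_tokens=500)
# Hypothetical agentic coding user: hundreds of long-context calls a day.
heavy = monthly_cost(messages_per_day=400, input_tokens=60_000, output_tokens=2_000)

print(f"casual: ${casual:,.2f}/mo, margin on a $20 plan: {gross_margin(20, casual):.0%}")
print(f"heavy: ${heavy:,.2f}/mo, margin on a $200 plan: {gross_margin(200, heavy):.0%}")
```

With these made-up inputs the casual user costs about $4 a month while the heavy user costs roughly $2,500, a deeply negative margin even on a $200 plan.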

That’s the context. Now let’s look at how the top companies are actually handling it.

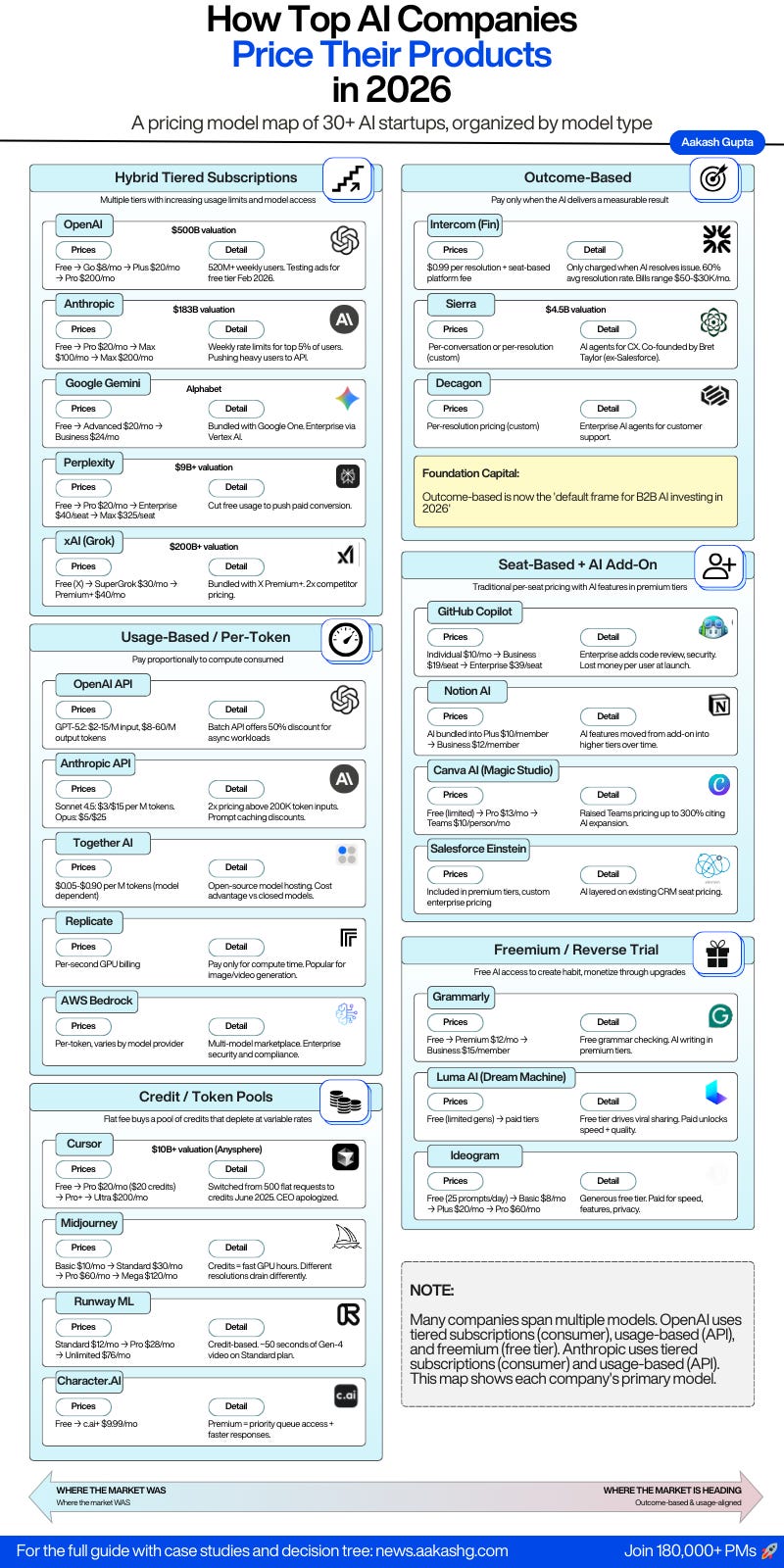

1. The 6 AI Pricing Models (With 30+ Real Companies)

We mapped pricing approaches across the 50 highest-valued AI startups as of February 2026. To understand why Cursor’s $7,225 invoice happened, you need to understand the six pricing models AI companies are choosing between, and why each one breaks differently.

The biggest surprise: nearly half the companies we studied use two or three models simultaneously.

OpenAI runs tiered subscriptions for consumers, usage-based pricing for the API, and freemium for the free tier. Anthropic does the same. Categorizing them forced us to pick a primary model for each, but the real story is that pure-play pricing is dying.

Model 1 - Hybrid Tiered Subscriptions

This is the dominant consumer AI model. Multiple tiers with increasing usage limits, model access, and features. It's also the model most likely to change in the next 12 months.

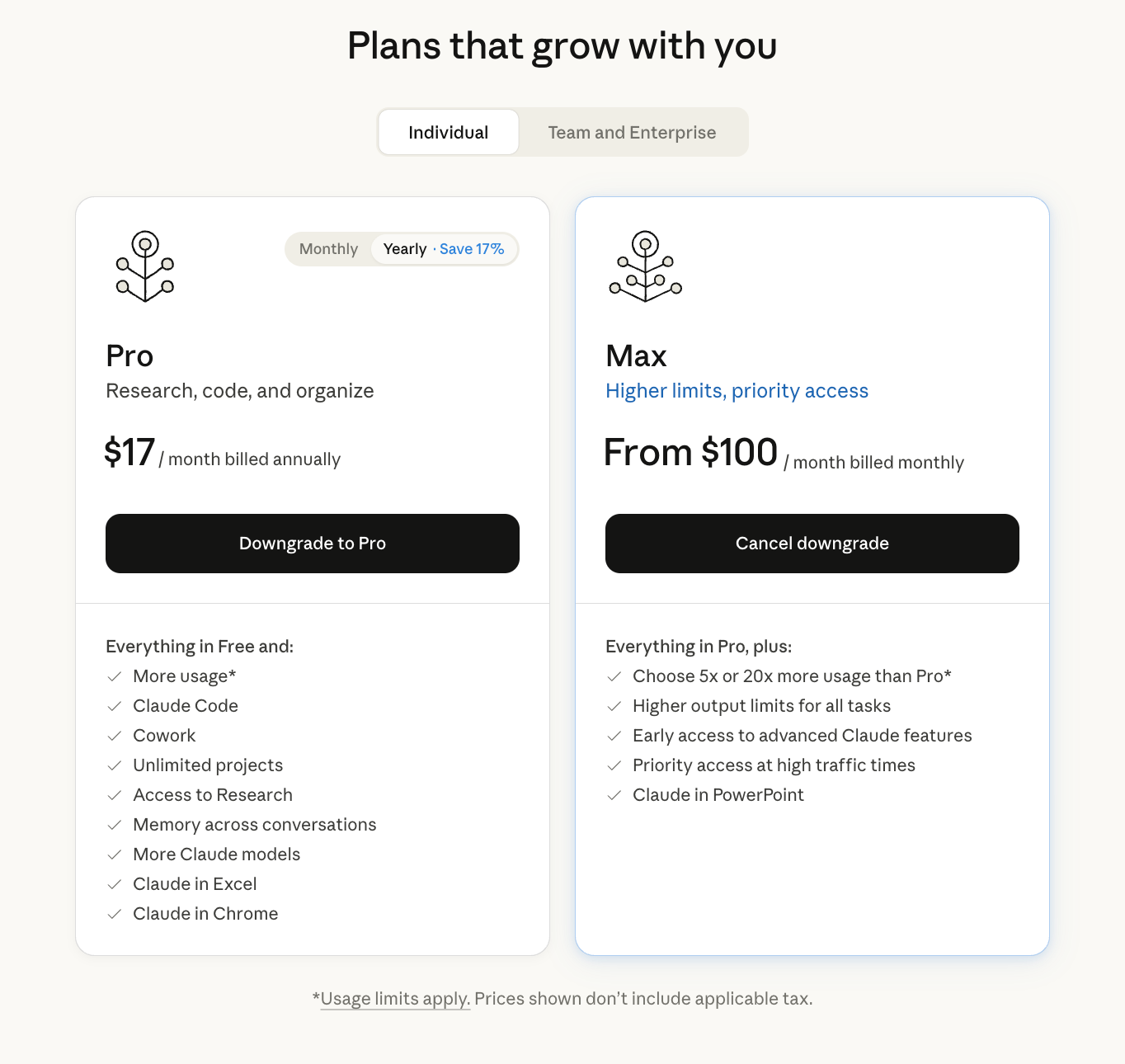

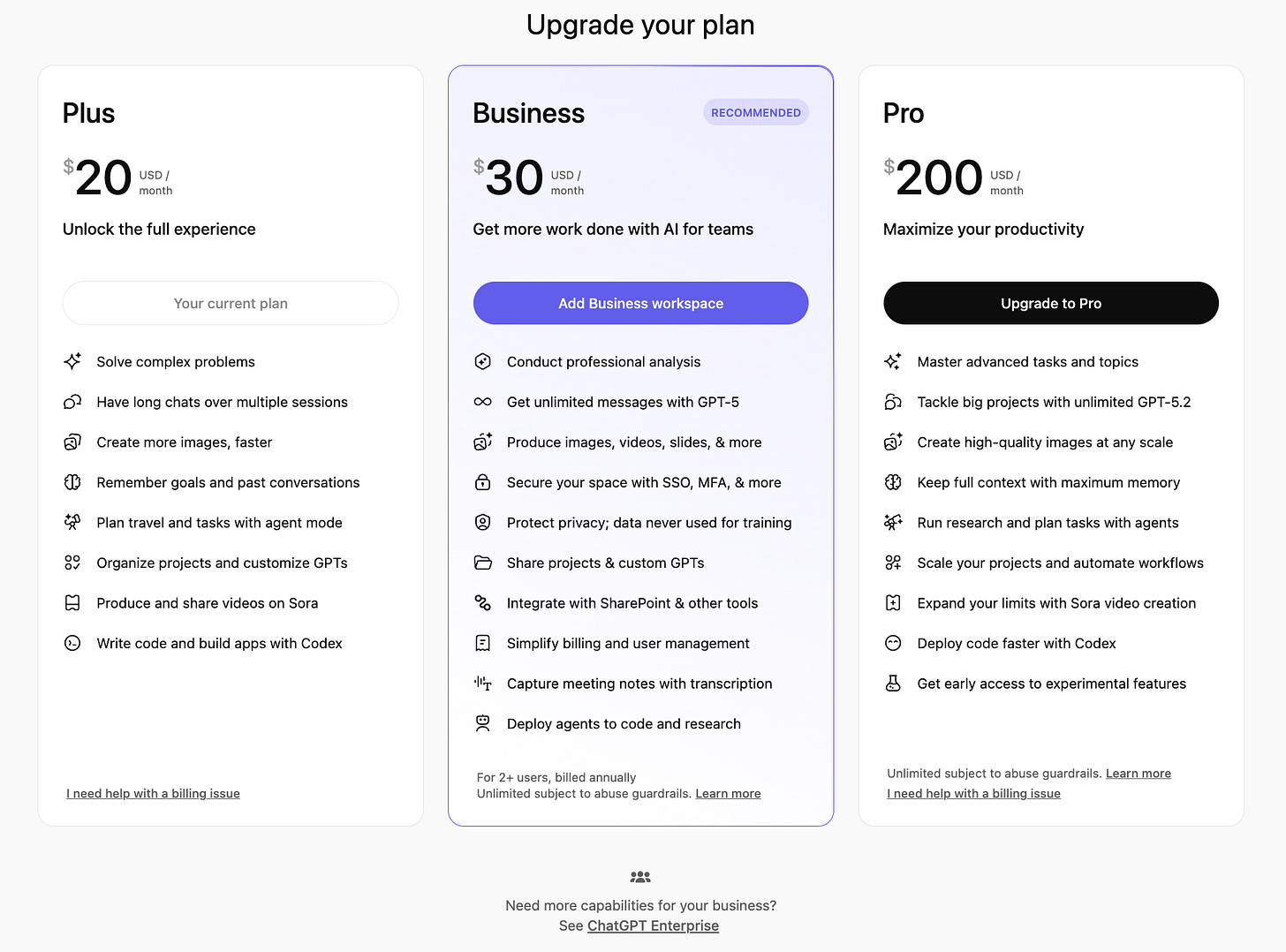

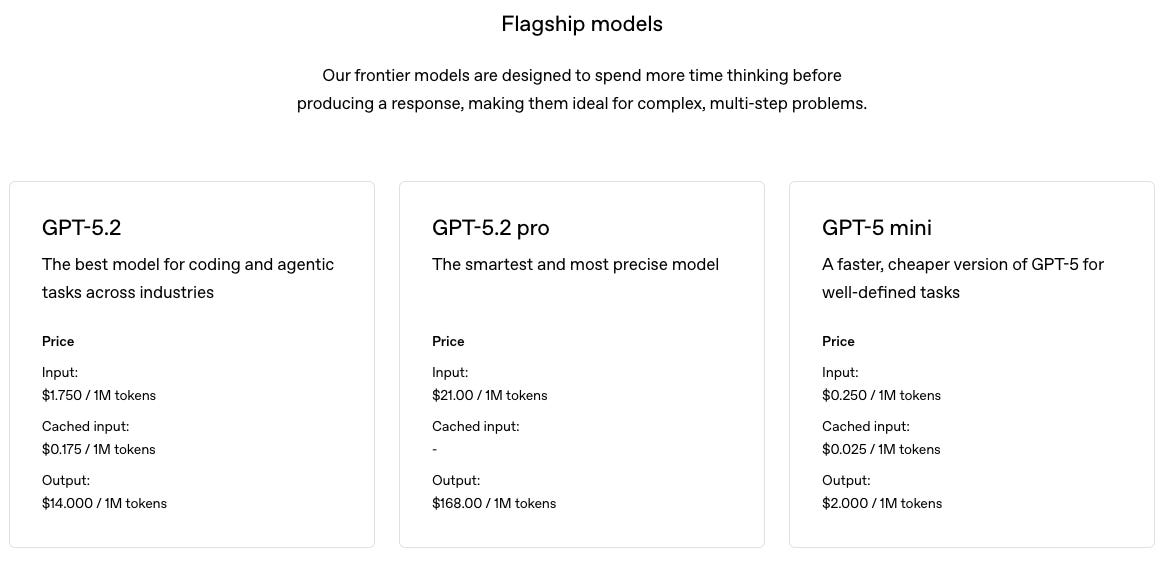

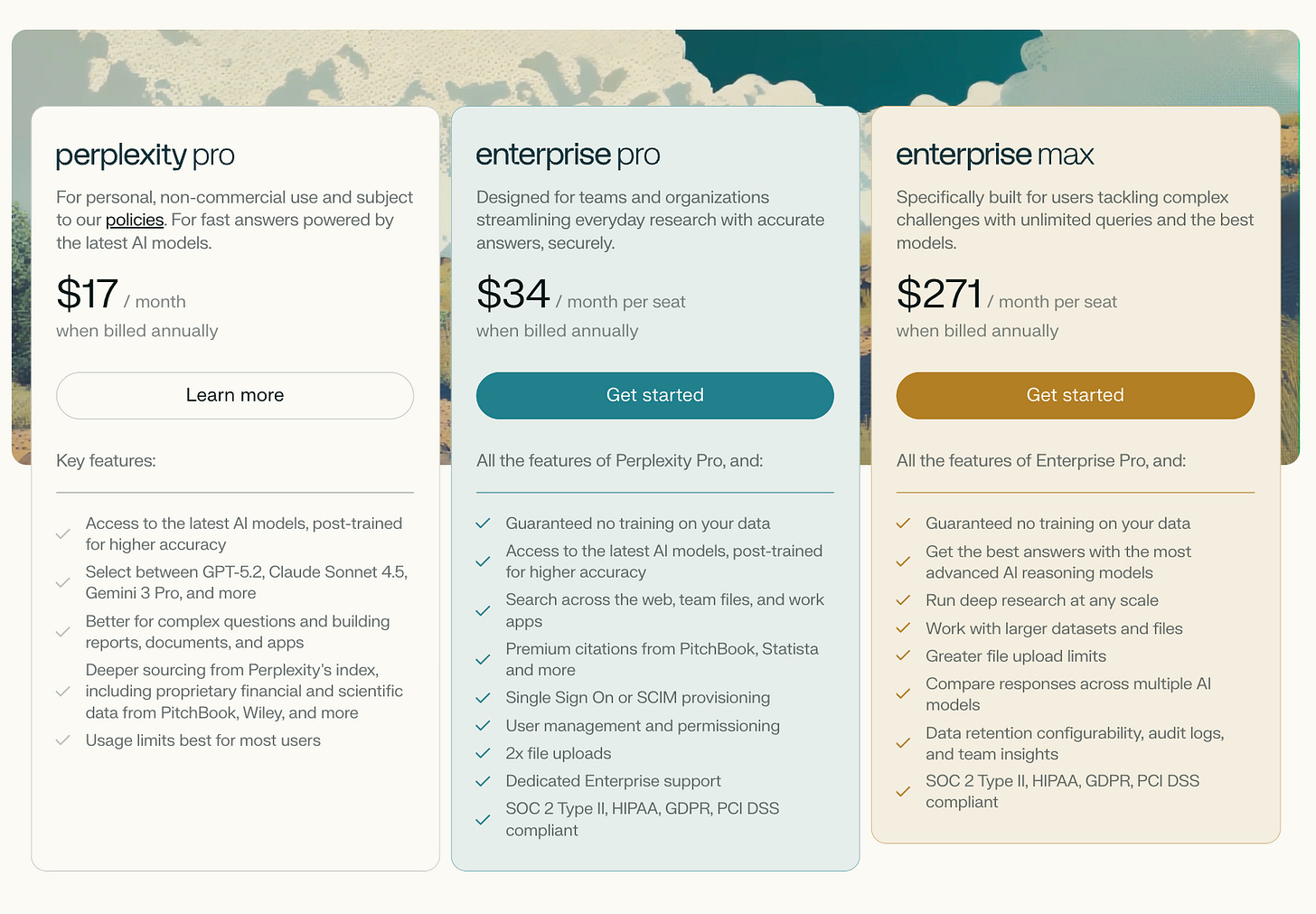

OpenAI and Anthropic converged on almost identical structures. Claude: Free → Pro $17/mo (billed annually) → Max 5x $100/mo → Max 20x $200/mo. ChatGPT: Free → Plus $20/mo → Business $30/mo → Pro $200/mo. Perplexity, Gemini, and Grok all cluster around the same $20 entry point.

This wasn’t coordinated. It evolved because these companies face the same unit economics constraints and the same competitive pressure to match each other’s floor.

The clever part is how both Anthropic and OpenAI deliberately avoid publishing exact usage limits. This intentional opacity lets them manage compute costs dynamically without setting hard expectations that become liabilities.

Moe and I argued about whether this counts as smart pricing or just dodging accountability. His take: it’s both. You get margin flexibility, but you also get users who feel gaslit when they hit a limit nobody told them existed.

If your AI feature has variable compute costs per user, tiered subscriptions let you contain the downside while still capturing willingness to pay at the top. But you need product analytics tracking cost-per-user at each tier to know if your tiers are actually profitable.
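A minimal sketch of that per-tier check, assuming you can already attribute compute cost to individual users (often the hard part in practice). The `UserMonth` record, the tier names, and the dollar figures are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UserMonth:
    tier: str            # hypothetical tier label, e.g. "pro" or "max"
    price: float         # what the user paid this month, USD
    compute_cost: float  # inference cost attributed to the user, USD


def tier_margins(rows: list[UserMonth]) -> dict[str, float]:
    """Aggregate gross margin per tier: (revenue - compute) / revenue."""
    revenue: dict[str, float] = defaultdict(float)
    cost: dict[str, float] = defaultdict(float)
    for r in rows:
        revenue[r.tier] += r.price
        cost[r.tier] += r.compute_cost
    return {t: (revenue[t] - cost[t]) / revenue[t] for t in revenue}


rows = [
    UserMonth("pro", 20, 3), UserMonth("pro", 20, 5), UserMonth("pro", 20, 40),
    UserMonth("max", 200, 90), UserMonth("max", 200, 650),
]
margins = tier_margins(rows)
for tier, m in margins.items():
    print(f"{tier}: {m:.0%}")
```

Averages hide the problem: in this toy data a single Pro user costing $40 drags the whole tier down to a 20% margin, and one heavy Max user puts that tier underwater.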

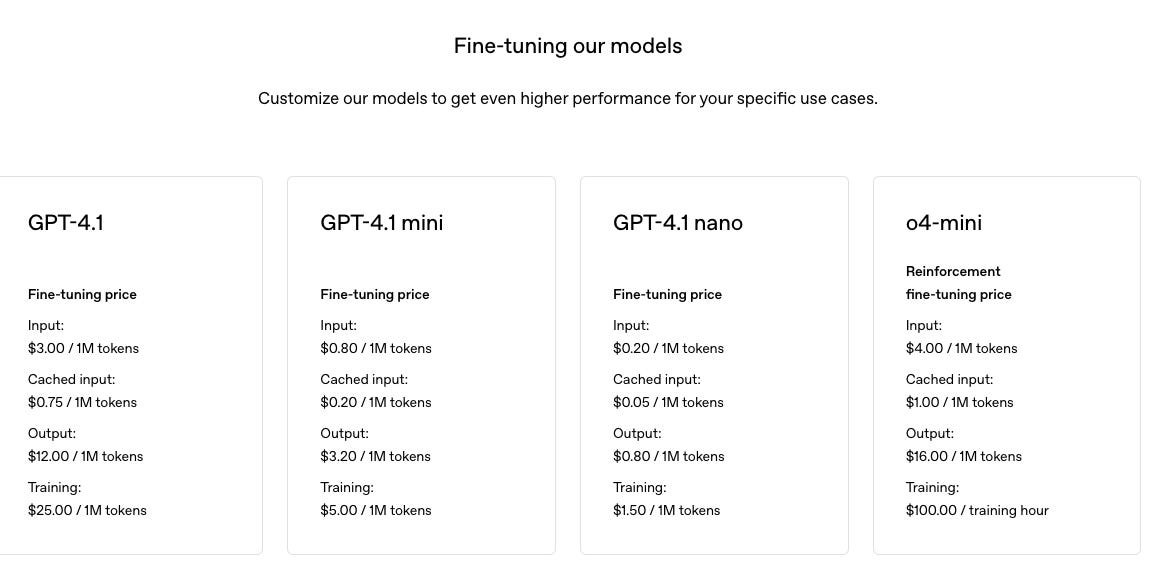

Model 2 - Usage-Based / Per-Token

The infrastructure model. Pay proportionally to compute consumed.

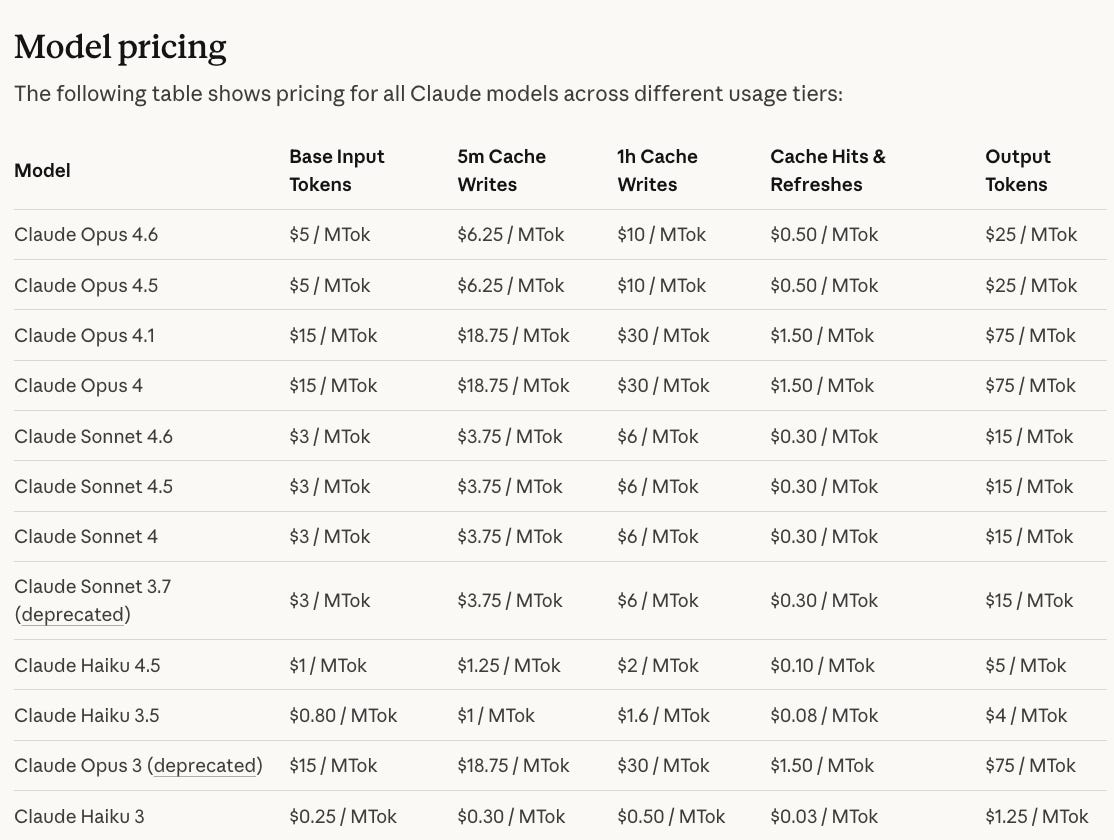

Anthropic's API charges $3 per million input tokens and $15 per million output tokens for Sonnet 4.5. Cross the 200K-token input threshold and the entire request is billed at $6/$22.50: double the input rate and 1.5x the output rate.

That threshold matters enormously when processing long documents or codebases. OpenAI’s Batch API offers 50% discounts for non-real-time workloads, which changes unit economics dramatically if your use case tolerates latency.
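The threshold rule is easy to get wrong in a cost model because it reprices the whole request, not just the tokens above 200K. Here is a sketch using the Sonnet 4.5 rates above. `batch_discount` is a generic parameter added to show the effect of a 50% batch discount like OpenAI's; whether a given provider stacks batch discounts with long-context rates is an assumption to check against its pricing docs.

```python
# Sonnet 4.5 list prices in USD per million tokens, as quoted above.
STANDARD = (3.00, 15.00)      # (input, output) for requests at or under the threshold
LONG_CONTEXT = (6.00, 22.50)  # applies to the WHOLE request once input exceeds it
THRESHOLD = 200_000           # input tokens


def request_cost(input_tokens: int, output_tokens: int,
                 batch_discount: float = 0.0) -> float:
    """USD cost of one request; batch_discount=0.5 models a 50% batch discount."""
    in_rate, out_rate = LONG_CONTEXT if input_tokens > THRESHOLD else STANDARD
    cost = (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
    return cost * (1 - batch_discount)


print(f"{request_cost(200_000, 4_000):.2f}")       # at the threshold: 0.66
print(f"{request_cost(200_001, 4_000):.2f}")       # one token over: 1.29
print(f"{request_cost(200_001, 4_000, 0.5):.2f}")  # same request, batched: 0.65
```

One extra input token nearly doubles the bill for that call, which is why keeping long documents under the threshold, or batching work that can wait, changes unit economics.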

Every API call costs compute and every API call generates revenue, so gross margins stay consistent regardless of scale.

The problem is that nobody wants a surprise bill. Developer forums are full of people who ran one batch job and woke up to a four-figure invoice.

And the competitive moat is thin. AI inference costs fell 78% through 2025 for some providers. If a competitor drops their per-token price, customers can switch with near-zero friction.

Usage-based works when your customers are developers building on your platform. They expect it, can optimize for it, and have engineering resources to monitor it. For end users, it creates anxiety.

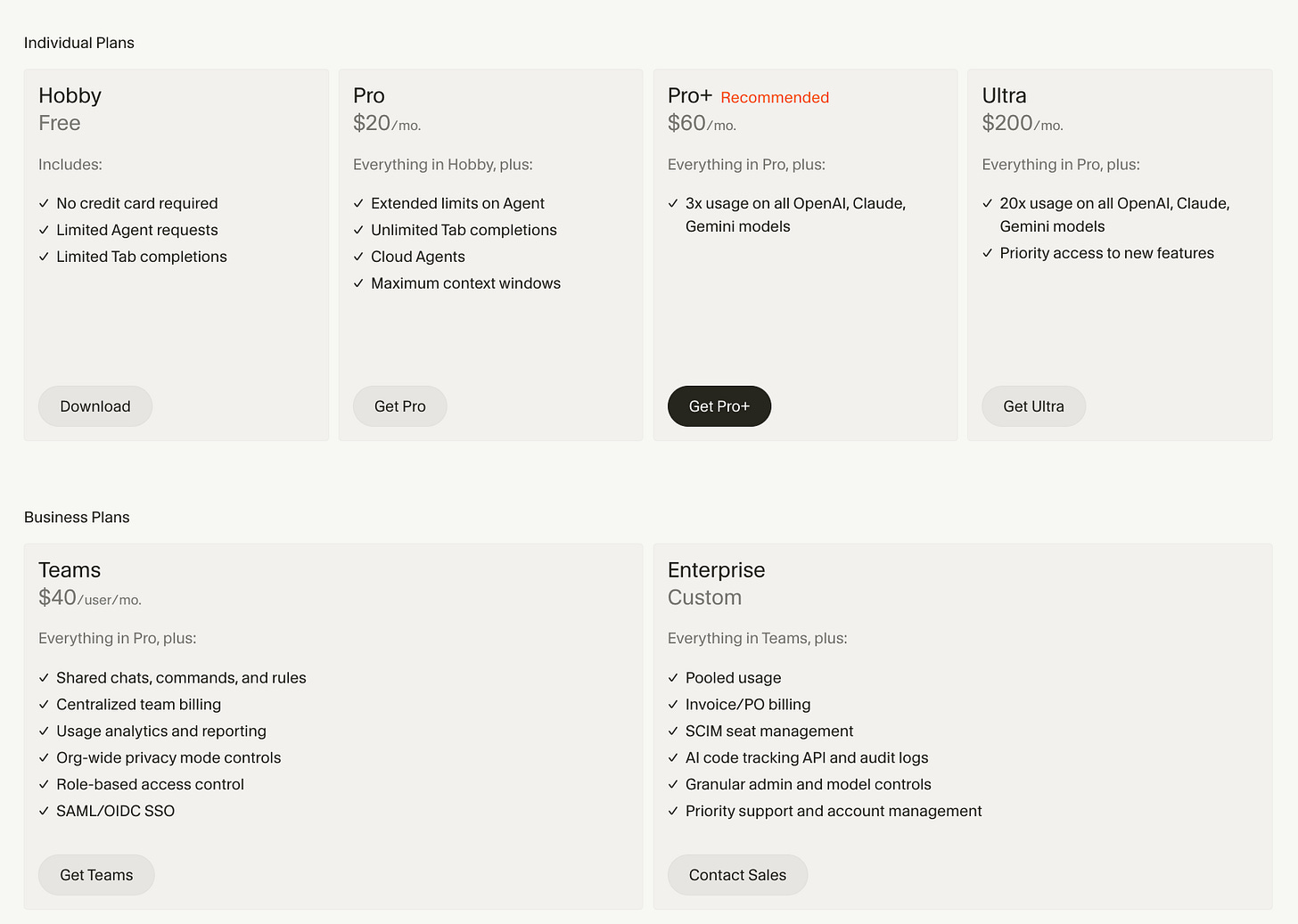

Model 3 - Credit / Token Pools

Flat subscription, but users get a pool of credits that deplete at different rates depending on what they do. This is the model causing the most drama right now.

Cursor is the poster child. Their Pro plan gives you $20/month in credits. That buys roughly 225 Claude Sonnet 4 requests, or 500 GPT-5 requests, or 550 Gemini requests. A simple tab completion costs fractions of a cent. A multi-file refactor with an expensive model can burn $5 in one prompt.
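The mechanics are easy to simulate. This hypothetical sketch assumes a $20 pool tracked in integer cents, an average Claude Sonnet 4 request of about 9 cents ($20 / 225, rounded), and a heavy multi-file refactor of $5. It is not Cursor's billing logic, just an illustration of how a few expensive prompts eat the budget that would otherwise cover hundreds of ordinary ones.

```python
# A $20/month credit pool tracked in integer cents to avoid float drift.
POOL_CENTS = 2_000
SONNET_REQUEST = 9    # ~ $20 / 225 requests, rounded (assumed average)
HEAVY_REFACTOR = 500  # a $5 multi-file refactor on an expensive model


def drain(pool_cents: int, request_costs: list[int]) -> tuple[int, int]:
    """Serve requests in order until one no longer fits in the remaining pool.

    Returns (requests_served, cents_left).
    """
    served = 0
    for cost in request_costs:
        if cost > pool_cents:
            break  # pool exhausted; what happens next is a product decision
        pool_cents -= cost
        served += 1
    return served, pool_cents


light_month = [SONNET_REQUEST] * 300
heavy_start = [HEAVY_REFACTOR] * 3 + [SONNET_REQUEST] * 300

print(drain(POOL_CENTS, light_month))  # (222, 2)
print(drain(POOL_CENTS, heavy_start))  # (58, 5)
```

Three heavy prompts leave room for only 55 ordinary requests instead of 222, the same dynamic behind users reporting a monthly allocation gone after a handful of prompts.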

Before June 2025, Cursor's Pro plan included a flat 500 requests/month. No surprises.

The switch to credit pools caused a developer revolt so fierce that CEO Michael Truell published a public apology on July 4, 2025: “We recognize that we didn’t handle this pricing rollout well, and we’re sorry” (TechCrunch).

Some users reported burning through their entire monthly allocation in 2-3 heavy prompts. The plan description was quietly changed from “Unlimited” to “Extended” twelve days after launch.

Midjourney uses a similar approach: subscriptions give you “fast GPU hours” that deplete at different rates depending on resolution and quality settings. Runway ML runs on credits where $12/month covers roughly 50 seconds of Gen-4 video.

User trust is the trade-off, and Cursor’s community backlash shows what happens when you convert a predictable model to a variable one without over-communicating.

This is the model that produced that $7,225 invoice. We’ll tear apart exactly what went wrong in the case studies below.

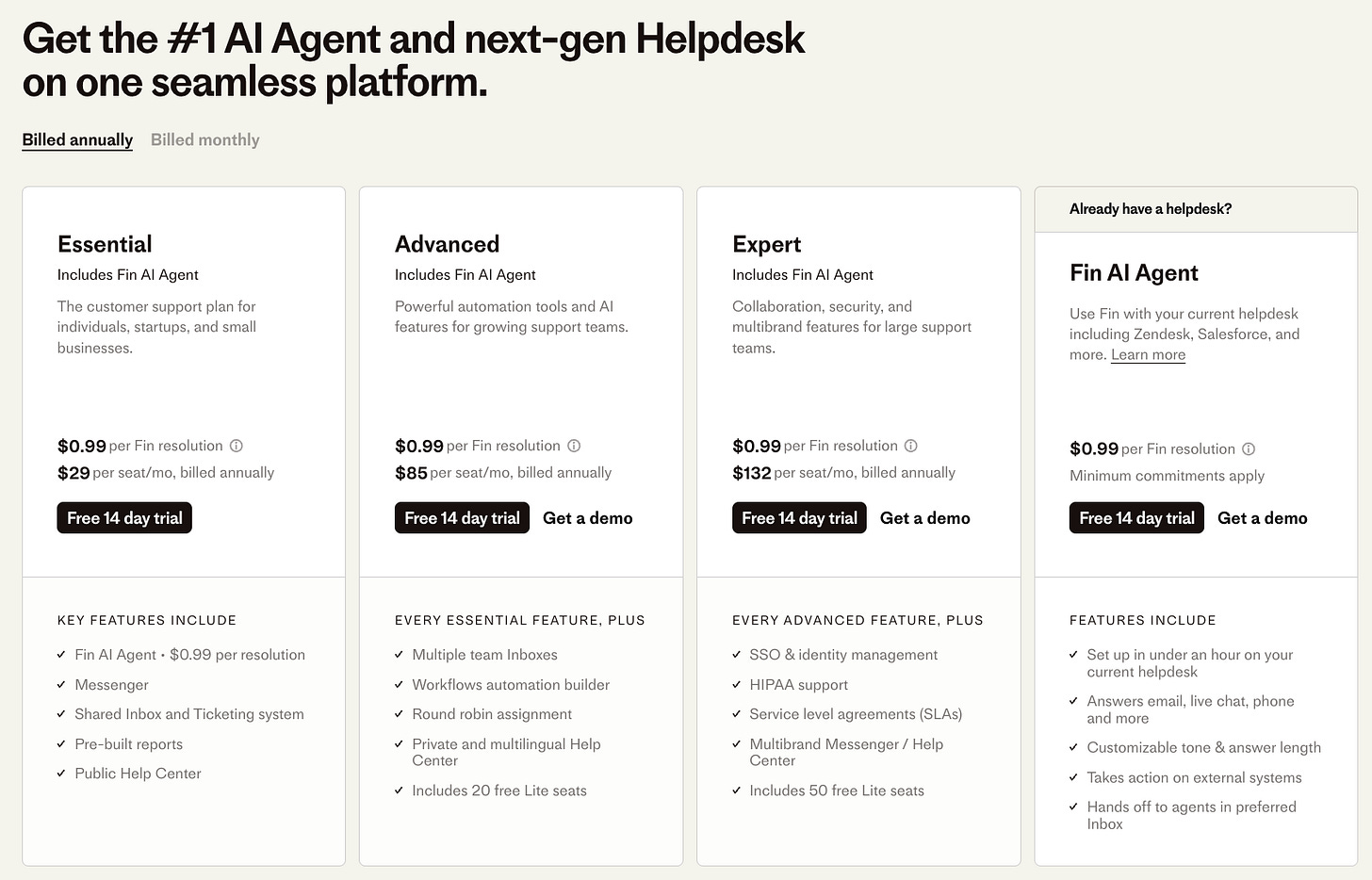

Model 4 - Outcome-Based

The model that gets VCs most excited. Charge based on what the AI accomplishes.

Intercom's Fin AI agent costs $0.99 per resolution. A “resolution” means the customer either confirms the answer helped or exits without requesting further assistance. You only pay when Fin solves a problem. If it fails and hands off to a human, there's no charge.

Elegant in theory. Then you do the math at scale. A company handling 30,000 conversations/month where Fin resolves 60% pays $17,820/month in resolution fees alone (18,000 resolutions × $0.99), on top of its seat-based Intercom subscription. One analysis of a 15-agent setup put total monthly spend at roughly $32,734.

Intercom's counterargument is straightforward: if Fin resolves 18,000 conversations that would have required human agents at $15-25/hour, the customer still comes out ahead. Intercom case studies report companies saving over 1,300 hours in six months with 50%+ resolution rates.
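The break-even logic fits in a few lines. The 8-minute handle time per conversation is a hypothetical assumption, and the comparison counts wages only, leaving out benefits, management overhead, and Intercom's seat fees.

```python
PRICE_PER_RESOLUTION = 0.99  # Fin's per-resolution price quoted above


def fin_fees(conversations: int, resolution_rate: float) -> float:
    """Outcome-based fee: only resolved conversations are billed."""
    return conversations * resolution_rate * PRICE_PER_RESOLUTION


def human_cost(conversations: float, minutes_each: float, hourly_wage: float) -> float:
    """Wage cost of humans handling the same conversations (wages only)."""
    return conversations * minutes_each / 60 * hourly_wage


resolved = 30_000 * 0.60       # 18,000 resolutions
fees = fin_fees(30_000, 0.60)  # $17,820
for wage in (15, 25):          # the $15-25/hour range above
    labor = human_cost(resolved, minutes_each=8, hourly_wage=wage)  # 8 min: assumption
    print(f"wage ${wage}/h: humans ${labor:,.0f} vs Fin fees ${fees:,.0f}")
```

The conclusion is sensitive to handle time: halve the assumed minutes and the low-wage case is close to break-even, which is why the "customer still comes out ahead" argument depends on the customer's own support costs.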

Foundation Capital calls this the “services as software” thesis, and considers it the default frame for B2B AI investing in 2026. Revenue scales with AI performance. But that cuts both ways: if your model has a bad week, revenue drops.

If Cursor had been able to price on outcomes (code shipped, bugs fixed, PRs merged), none of this would have happened. The reason they couldn’t is the same reason most AI companies can’t yet: outcome measurement infrastructure doesn’t exist for most categories.

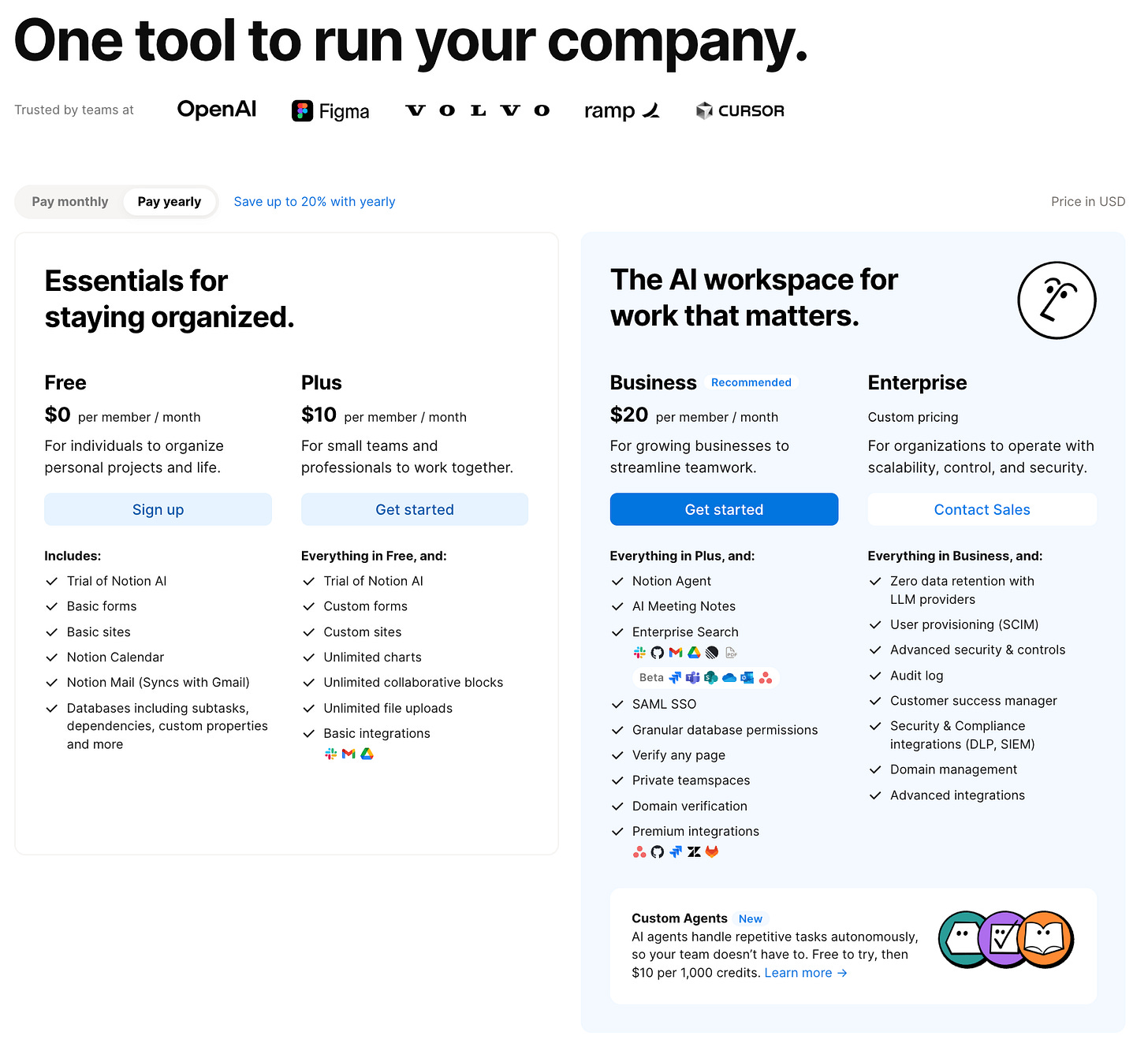

Model 5 - Seat-Based + AI Add-On

The “AI upgrade” path of least resistance for established SaaS. Your base product charges per seat, AI features go into premium tiers or add-ons.

Notion bundled AI features into higher-priced plans rather than selling a separate add-on. Canva raised Teams pricing by up to 300%, explicitly citing AI feature expansion. GitHub Copilot charges $10/month Individual, $19/user/month Business, $39/user/month Enterprise.

Operationally simple. You already have per-seat billing and a sales motion. But your highest-usage users are your most expensive to serve, and they’re paying the same as light users. This is the same dynamic that pushed Cursor to credit pools and Replit to effort-based pricing.

Treat seat-based + AI as a bridge, not a destination. Track per-user compute costs from day one. If your P90 user costs 10x your P50 user, you’ll eventually need usage-based guardrails. Use product metrics to build this visibility before it becomes a crisis.
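The P90/P50 check is a few lines with Python's standard `statistics` module. The 10x threshold is the rule of thumb above and the cost data is hypothetical. Percentile definitions differ between tools (this uses `statistics.quantiles` with its default exclusive method), which matters on small samples.

```python
import statistics


def p90_p50_ratio(monthly_costs: list[float]) -> float:
    """P90 / P50 of per-user monthly compute cost."""
    deciles = statistics.quantiles(monthly_costs, n=10)  # 9 cut points: P10 ... P90
    return deciles[-1] / statistics.median(monthly_costs)


def needs_usage_guardrails(monthly_costs: list[float], threshold: float = 10.0) -> bool:
    """Flag when the P90 user costs at least `threshold` times the median user."""
    return p90_p50_ratio(monthly_costs) >= threshold


# Hypothetical per-user compute costs (USD/month) for one seat-priced plan.
costs = [1.0] * 5 + [2.0] * 10 + [30.0] * 3 + [200.0]
print(p90_p50_ratio(costs), needs_usage_guardrails(costs))  # 15.0 True
```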

Model 6 - Freemium / Reverse Trial

Give away AI to create habit, monetize through upgrades.

OpenAI’s free ChatGPT tier serves over 900 million weekly users. They give away GPT-5.2 with strict limits, then convert to Plus ($20) and Pro ($200). In February 2026, they started testing ads for free and Go tier users in the U.S. to monetize the massive free base.

Perplexity’s free tier gives unlimited basic searches but gates multi-step reasoning behind Pro at $17/month.

The economics are brutal. OpenAI burned $8 billion on compute in 2025. Most startups can't afford that bet.

If your free-to-paid conversion is below 2-3%, your free tier is too generous. Consider a “reverse trial” instead: full access for 14 days, then a downgrade to the free tier. Users experience premium AI quality before hitting limits, which produces better conversion than a permanently crippled free experience.
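A reverse trial is mostly an entitlement rule plus a conversion metric. In this sketch the tier labels (`premium`, `premium_trial`, `free`) and the cohort numbers are hypothetical, and the 2% floor is the low end of the rule of thumb above.

```python
from datetime import date, timedelta

TRIAL_DAYS = 14          # full-access window described above
CONVERSION_FLOOR = 0.02  # low end of the 2-3% rule of thumb


def entitlement(signup: date, today: date, is_paid: bool) -> str:
    """Reverse trial: premium for the first TRIAL_DAYS, then free unless paid."""
    if is_paid:
        return "premium"
    if today < signup + timedelta(days=TRIAL_DAYS):
        return "premium_trial"
    return "free"


def free_tier_too_generous(cohort_size: int, converted: int) -> bool:
    """True when free-to-paid conversion falls below the floor."""
    return converted / cohort_size < CONVERSION_FLOOR


signup = date(2026, 2, 1)
print(entitlement(signup, date(2026, 2, 14), is_paid=False))      # premium_trial
print(entitlement(signup, date(2026, 2, 15), is_paid=False))      # free
print(free_tier_too_generous(cohort_size=10_000, converted=150))  # True (1.5%)
```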

If you’re building a PLG motion, this is the most important metric to get right. And if you’re wondering how to actually choose between these six models for your product, the decision tree in Section 3 walks through it step by step, starting with the same cost variance data that would have saved Cursor from their July crisis.

2. Four Case Studies: Companies Getting Pricing Right (and Wrong)

Case Study 1 - Anthropic’s $17/$100/$200 Staircase and the Rate Limit Gambit

Anthropic's pricing evolution shows what disciplined margin management looks like at scale.

When Claude Pro launched at $17/month, it felt generous: roughly 45 messages per 5-hour window, access to the best models, and priority during peaks. But power users, especially developers running Claude Code, were consuming compute far beyond what $17 covered. Anthropic later acknowledged losing tens of thousands of dollars per month on heavy users, even on the original $200 plan (Tanay Jaipuria).

Their response was layered. First, Max tiers: $100/month (5x Pro) and $200/month (20x Pro).

Then in July 2025, weekly rate limits targeting less than 5% of subscribers. The message was subtle: we want heavy usage. But if you're treating a consumer subscription like unlimited API access, we'll throttle you toward the API, where costs are covered precisely through per-token pricing.
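Anthropic doesn't publish its enforcement logic, so this is only a generic sketch of the layered idea: a sliding-window-log limiter that admits a message only if both a short window and a weekly window still have room. The 45-per-5-hours figure comes from the Pro description above; the weekly cap of 900 is a made-up placeholder, and Anthropic's actual reset behavior may differ. The sketch assumes timestamps arrive in non-decreasing order.

```python
from collections import deque

HOUR = 3600.0


class SlidingWindowLimiter:
    """Admit a message only if every (window_seconds, max_messages) window has room.

    A generic sliding-window-log limiter, not Anthropic's actual implementation.
    """

    def __init__(self, windows: list[tuple[float, int]]):
        self.windows = windows
        self.longest = max(length for length, _ in windows)
        self.log: deque = deque()  # timestamps (seconds) of admitted messages

    def allow(self, now: float) -> bool:
        # Drop timestamps that have fallen out of even the longest window.
        while self.log and self.log[0] <= now - self.longest:
            self.log.popleft()
        for length, limit in self.windows:
            recent = sum(1 for t in self.log if t > now - length)
            if recent >= limit:
                return False  # rejected messages are not logged
        self.log.append(now)
        return True


# ~45 messages per 5-hour window plus a weekly cap (hypothetical number).
pro = SlidingWindowLimiter([(5 * HOUR, 45), (7 * 24 * HOUR, 900)])
results = [pro.allow(now=float(i)) for i in range(46)]  # 46 messages in 46 seconds
print(results.count(True), results[-1])  # 45 False
```

Stacking windows lets casual bursts through while capping sustained, API-like usage, which is the behavior the weekly limits target.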

That 5% number deserves scrutiny. Anthropic frames it as “less than 5% affected,” which makes the rate limits sound surgical. But that 5% includes the most engaged, most vocal, most likely-to-evangelize users on the platform. Power users who hit rate limits don’t just quietly downgrade. They post about it. They compare alternatives.

For every user Anthropic successfully migrates to Max or the API, some percentage defects to competitors or open-source alternatives. The rate limit strategy works financially, but its long-term impact on retention in Anthropic's highest-value segment is harder to measure than the compute savings.

When Cowork launched in January 2026 (first to Max users, expanded to Pro four days later), Anthropic made the same calculation: agentic tasks eat tokens fast, so push users toward higher tiers or the API.

Then there's DeepSeek. Its January 2025 launch demonstrated competitive LLM performance at dramatically lower cost.

Anthropic didn’t cut prices. They bet that the ecosystem around Claude (Code, Cowork, Projects, MCP integrations) creates enough switching cost that users won’t leave over a price gap. So far that bet is paying off. The question is whether it holds as open-source models close the quality gap further.

If you’re designing tiers, start by clustering user behaviors, not usage volume. Anthropic’s $17/$100/$200 works because a casual user and a Claude Code developer aren’t “light” and “heavy” versions of the same behavior. They’re different products. The breakpoints should feel like natural product boundaries, not arbitrary volume cutoffs.
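One way to start is to cluster on behavioral features rather than volume. This is plain k-means over two hypothetical features: the share of a user's sessions that are agentic (tool use, multi-step tasks) and the share that run longer than an hour. Usage volume is deliberately left out. The feature names and data are made up, the initialization is the simplest deterministic choice, and a real analysis would standardize features and choose k with care.

```python
import math

Point = tuple[float, float]


def kmeans(points: list[Point], k: int, iters: int = 20) -> list[int]:
    """Plain k-means. Initial centroids are the first k points (deterministic)."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assign each user to the nearest centroid (Euclidean distance).
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        # Move each centroid to the mean of the users assigned to it.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels


# (agentic_share, long_session_share) per user: hypothetical behavioral features.
users = [(0.05, 0.10), (0.90, 0.80), (0.10, 0.05),
         (0.85, 0.90), (0.00, 0.20), (0.95, 0.70)]
print(kmeans(users, k=2))  # [0, 1, 0, 1, 0, 1]
```

The resulting groups map to "chat" and "agentic coding" users, which is the kind of natural product boundary a tier breakpoint should follow.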


That’s how Anthropic is navigating the hardest pricing problem in AI. Below: how Cursor broke trust and recovered, how Intercom’s outcome-based model actually works at scale, how Replit nearly killed their margins and escaped, the full decision tree for picking your model, and 5 pricing interview questions with worked answers.


Subscribe to Product Growth to keep reading this post and get 7 days of free access to the full post archives.