Get the Hermes starter kit (PM-built)

Hermes writes its own skills. Inside: 20-min setup, 3 PM workflows, full SKILL files, and the 30-day rollout I followed.

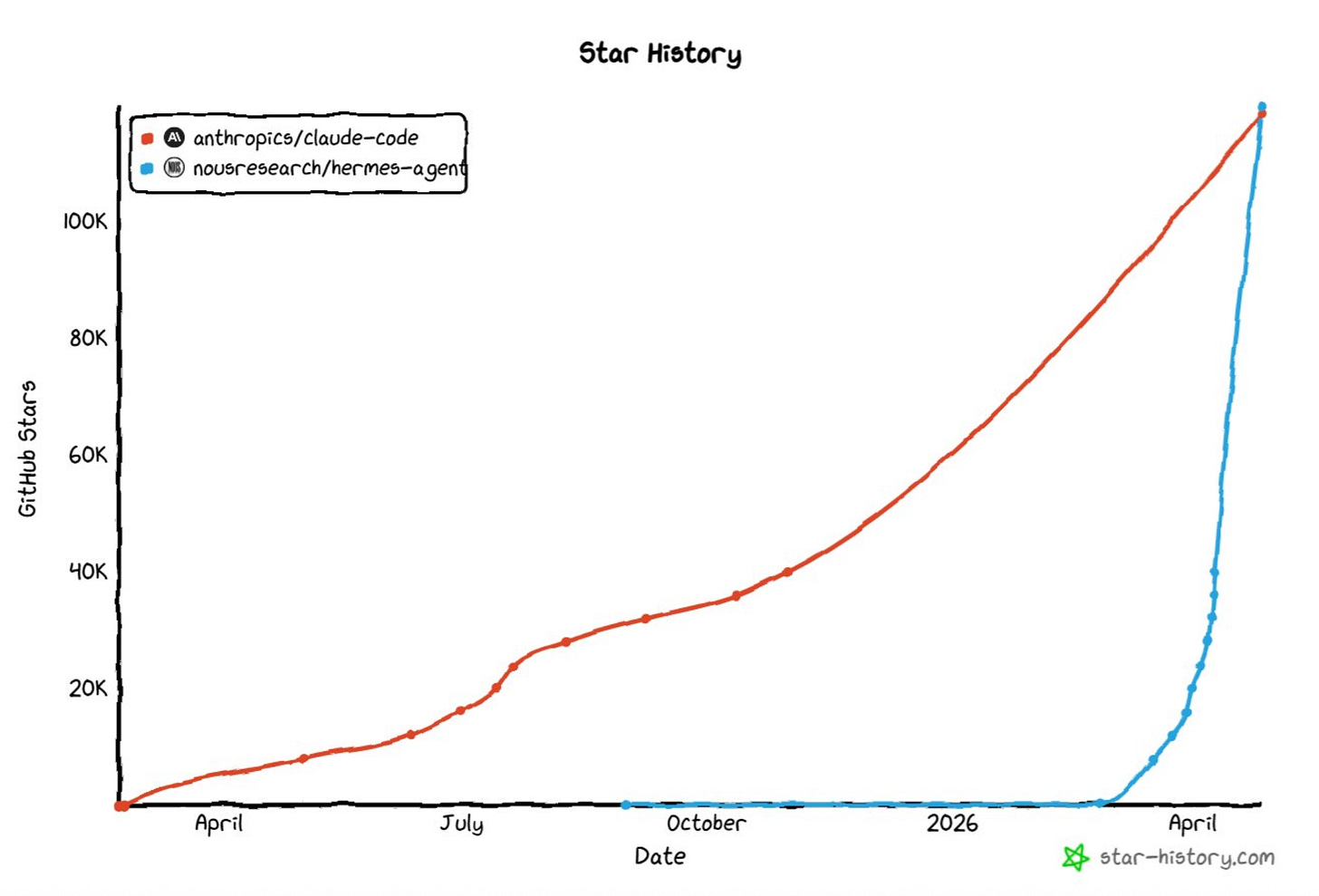

Look at that blue line.

Hermes, from Nous Research, just passed Claude Code in GitHub stars. Seven weeks to cross 100K. That’s faster than LangChain, AutoGPT, or any other agent framework I’ve tracked.

But GitHub stars are an engineering metric, so I spent a month figuring out what actually matters for PMs.

Some of the migration from OpenClaw was security-driven (512 vulnerabilities and 335 malicious skills were documented in OpenClaw in January). That explains the timing. It doesn’t explain why people stayed.

I set up a competitive monitoring workflow on a Tuesday. Same prompt every Monday for six weeks. By week six, the briefing was surfacing competitor patterns I hadn’t caught in three weeks of doing it manually. The prompt never changed. The skill underneath rewrote itself four times.

That’s the gap I’m going to show you today. I call it the Monday Gap: the difference between a static skill library and a self-improving one. It widens every week.

Why Hermes Matters for PMs

Every AI tool you use has the same gap. Every Monday, you re-explain who you are, what you’re working on, which competitors matter, and what you decided last quarter. Custom instructions help. A Claude project helps. My PM OS helps. But they all share one limit: the skills and prompts you wrote are static. They produce the same output in week 50 as in week 1.

Hermes writes its own skills.

Every 15 tool calls, it pauses, looks at what worked in the session, and saves a workflow file to ~/.hermes/skills/. You can read it, edit it, delete it. My competitive briefing skill from week one isn’t the one running today. The agent rewrote it four times.
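To make that loop concrete, here is a minimal Python sketch of the idea. It is not Hermes’s actual code. The `SkillRecorder` class, its `record_tool_call` method, and the numbered-steps file format are all hypothetical. Only two details come from the description above: the 15-call interval and the `~/.hermes/skills/` destination. The sketch also reduces “what worked” to “tool calls that succeeded”, deduplicated in first-seen order.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class SkillRecorder:
    """Hypothetical sketch: buffer tool calls and rewrite a skill file every N calls."""
    skill_name: str
    skills_dir: Path = Path.home() / ".hermes" / "skills"  # destination named in the post
    reflect_every: int = 15  # interval named in the post
    _calls: list = field(default_factory=list, init=False)
    revisions: int = field(default=0, init=False)

    def record_tool_call(self, tool: str, succeeded: bool) -> None:
        self._calls.append((tool, succeeded))
        # Pause and "reflect" each time another full interval of calls completes.
        if len(self._calls) % self.reflect_every == 0:
            self._reflect()

    def _reflect(self) -> None:
        # Keep only the tools that succeeded, deduplicated in first-seen order.
        steps = list(dict.fromkeys(tool for tool, ok in self._calls if ok))
        self.skills_dir.mkdir(parents=True, exist_ok=True)
        path = self.skills_dir / f"{self.skill_name}.md"
        lines = [f"# {self.skill_name}", ""] + [f"{i}. {s}" for i, s in enumerate(steps, 1)]
        # Overwrite the previous version: the skill file is rewritten, not appended to.
        path.write_text("\n".join(lines) + "\n")
        self.revisions += 1
```

The file is plain Markdown on disk. So, as with the real skills described above, you can open it, edit it, or delete it between runs.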

Numbers from my logs: the same task took 20 minutes in week one, 12 minutes in week four, and 8 minutes by week six. Same prompt. Same outputs. The agent stopped rediscovering the procedure every Monday.

The model isn’t where the value lives. Hermes runs Claude, GPT-4o, Gemini, or local Llama. Same skills, your choice of model. If Anthropic rate-limits you mid-launch, you have a fallback. If a cheaper model gets the job done, you save the spend.
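The fallback idea can be sketched in a few lines of Python. This assumes each model is wrapped as a plain function that takes a prompt and returns text. The `call_with_fallback` helper and the `RateLimitError` class are hypothetical stand-ins, not Hermes or vendor APIs. Real provider SDKs raise their own exception types.

```python
from typing import Callable, Sequence


class RateLimitError(Exception):
    """Hypothetical stand-in for whatever a provider raises when it throttles you."""


# A provider is a (name, call) pair; the call takes a prompt and returns text.
Provider = tuple[str, Callable[[str], str]]


def call_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (name, reply) from the first one that answers."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except RateLimitError as exc:
            errors.append(f"{name}: {exc}")  # throttled, so move on to the next model
    raise RuntimeError("all providers rate-limited: " + "; ".join(errors))
```

The design point is that the prompt and the skill stay fixed, and only the ordered list of models changes. Putting a cheaper model first is the same one-line change as adding a fallback.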

Three things make Hermes worth the 20-minute setup on top of what you already have:

Skills that rewrite themselves based on what worked in your last 10 sessions

Model-agnostic runtime so the same skills run on Claude, GPT, or local Llama

One agent across Telegram, Slack, WhatsApp, Discord, Signal

What’s in the toolkit (paid subscribers):

3 SKILL.md files (competitive-intel, signal-log, decision-log)

SOUL.md template (PM persona, pre-filled)

USER.md template (with auto-update fields)

30-day rollout plan (weeks 1, 4, and 8)

Drop the folder in ~/.hermes/skills/ and the skills load automatically.
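“Load automatically” usually just means a directory scan at startup. Here is a hypothetical Python sketch of one. It assumes each skill lives in its own subfolder containing a `SKILL.md`, which matches the three file names listed above. That layout, and the `discover_skills` function itself, are assumptions for illustration, not Hermes’s documented loader. It searches recursively, so it still works if you drop a wrapping toolkit folder in as-is.

```python
from pathlib import Path


def discover_skills(skills_dir: Path = Path.home() / ".hermes" / "skills") -> dict[str, str]:
    """Map each skill's folder name to the text of its SKILL.md (hypothetical layout)."""
    if not skills_dir.is_dir():
        return {}
    return {
        # The folder that holds SKILL.md names the skill, e.g. competitive-intel/SKILL.md.
        skill_file.parent.name: skill_file.read_text(encoding="utf-8")
        for skill_file in sorted(skills_dir.rglob("SKILL.md"))
    }
```

Files other than `SKILL.md` are ignored, so notes or templates in the same folder don’t get picked up as skills.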

Today’s Deep Dive

The Setup (20 Minutes, Then It Runs Forever)

3 Use Cases for PMs to try today

How self-learning agents look at the end of 30 days

Three things I’d tell you if you were starting Monday

Where to Start

The Toolkit (3 SKILL files + templates + 30-day plan)

Honest Limitations
